Article

Modern Java Garbage Collection: Production Judgment, Evidence Collection, and Tuning Paths

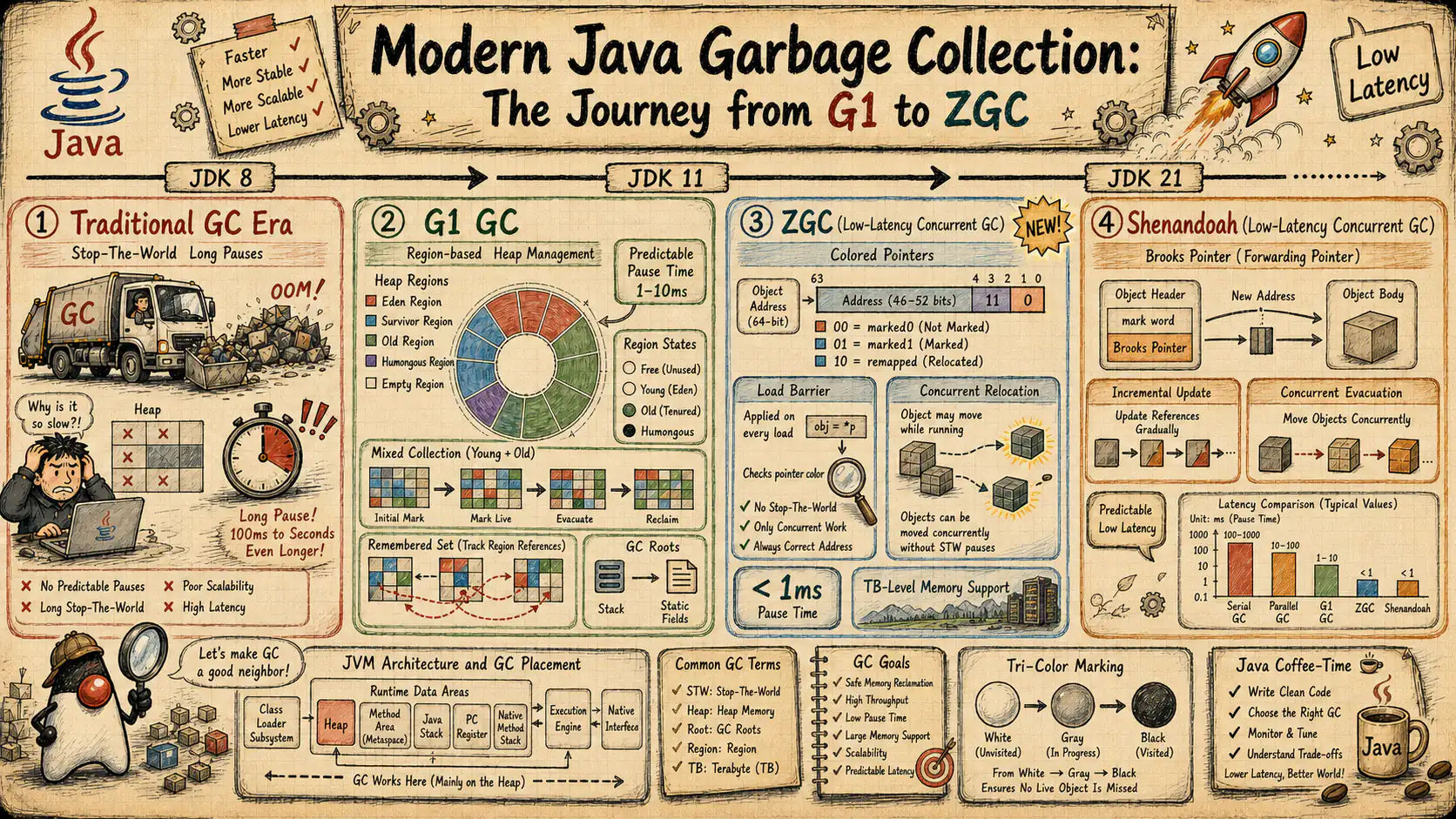

Use symptoms, GC logs, JFR, container memory, and rollback discipline to choose and tune G1, ZGC, Shenandoah, Parallel GC, and Serial GC without cargo-cult flags.

Modern Java Garbage Collection: Production Judgment, Evidence Collection, and Tuning Paths

Verification date: 2026-05-15. Statements about JDK/JEP status, collector defaults, removed modes, and GA/LTS/EA boundaries should be read against OpenJDK JEP pages, OpenJDK project pages, JDK release notes, or vendor release documentation. Any performance wording in this article is intentionally conservative and workload-bound. Claims such as “better throughput,” “lower pause,” or “more suitable for large heaps” are engineering tendencies, not portable promises across hardware, container limits, object-lifetime profiles, or vendor-specific JDK builds.

Abstract

In production, GC is not a bag of JVM flags, and it is not a quick matrix that says one collector is always right for one class of service. Within a concrete workload, JDK build, container budget, and evidence window, the real architectural question is harder: when a Java service shows throughput loss, latency spikes, CPU growth, persistent RSS expansion, container OOMKill, overly frequent Young GC, poor Mixed GC reclaim, sudden Full GC activity, or unstable p99 and p999 under load, how do you decide whether GC is actually the root cause rather than a visible by-product of something deeper?

Many teams stay stuck in the cargo-cult phase of GC tuning for years. The symptoms are familiar. They see higher latency and immediately tighten MaxGCPauseMillis. They see OOM and simply enlarge the heap. They hear that ZGC has short pauses and start treating it as an organizational default. They size -Xmx close to a container memory limit without budgeting for Metaspace, direct memory, thread stacks, code cache, native memory, and GC metadata. They blame every slow request on GC without checking safepoints, lock contention, thread-pool queueing, slow SQL, or downstream timeouts.

This article is not trying to celebrate a particular algorithm. It rebuilds a production decision path. Start from symptoms. Use GC logs, JFR, heap and non-heap evidence, and container memory evidence to decide whether GC is the root cause. Then choose the collector family according to throughput objectives, tail-latency objectives, object lifetimes, heap size, CPU budget, and rollback requirements. Finally, tune through small hypotheses, controlled load comparison, staged rollout, and continuous observation instead of cargo-culting a list of flags from the internet.

After reading, the reader should gain several durable capabilities. First, GC should be understood as a production engineering contract, not a classroom concept called “automatic memory management.” Second, the reader should know which symptoms justify suspecting GC first, and which symptoms more often come from object-lifecycle problems, pools, caches, serialization, networking, or container memory budgeting. Third, the reader should be able to read unified GC logs and JFR events for pause phases, allocation rate, promotion pressure, concurrent phase progress, humongous allocation, promotion failure, allocation stall, and safepoint relationships. Fourth, the reader should understand how heap, Metaspace, direct memory, thread stacks, code cache, native memory, and cgroup limits interact. Fifth, the reader should know the workload boundaries and misconceptions of G1, ZGC, Shenandoah, Parallel GC, and Serial GC, and connect collector decisions to rollout, rollback, and observability practice.

The article follows a production reading path on purpose: GC as an engineering contract; how symptoms become diagnostic entry points; how logs and JFR build evidence; how heap and container memory define fault boundaries; what the collector families are actually good at; how tuning workflows work; what the common collector-specific mistakes are; what a production runbook should do; and how to detect the most expensive misdiagnoses.

1. GC Is a Production Engineering Contract, Not a Flag Checklist

1.1 Define What You Are Actually Optimizing

The first step in GC tuning is not a parameter review. It is naming the optimization target precisely. Many teams say they want to “optimize GC,” but they never specify whether they are optimizing throughput, average latency, tail latency, memory footprint, CPU cost, container density, release risk, or one concrete failure mode. Without that target, there is no correct collector choice and no meaningful way to decide whether a change helped or hurt.

At a production level, at least six goals compete with one another. The first is throughput, meaning how much CPU remains available for actual application work. The second is latency, especially p99 and p999, because GC usually does not slow every request equally. The third is memory footprint, including not only heap but also metadata, evacuation headroom, remembered sets, forwarding structures, and floating garbage. The fourth is allocation efficiency, meaning how much object creation pressure the system can absorb over time. The fifth is operability, meaning whether the team can actually interpret the collector’s evidence surface. The sixth is rollout and rollback cost, because collector switches are runtime-behavior changes, not ordinary feature flags.

If the service is a trading gateway, real-time risk engine, matching system, low-latency recommendation service, or a strict tail-latency RPC service, then p99 and p999 matter far more than average pause. If the workload is batch ETL, index construction, report generation, or offline aggregation, the service may tolerate larger pauses as long as total completion time and hardware cost improve. In a multi-tenant container platform, the primary constraint may not be absolute pause time at all. It may be predictable RSS, better pod density, and lower OOMKill risk. Different goals naturally point to different collector trade-offs.

This is why the statement “the more advanced collector is better” is architecturally wrong. Advanced does not imply fit. Lower pause does not imply lower total cost. More concurrent collectors usually buy pause reductions by paying in CPU, metadata, memory bandwidth, and operational complexity. The architect’s job is not to pick the most fashionable collector. The job is to place the collector inside business constraints, hardware budgets, and a rollback-capable engineering process.

1.2 The Cost Model: Throughput, Latency, Memory, CPU, Allocation, and Tail Risk

GC cost in production cannot be reduced to “pause time.” Pause time matters, but it is never enough by itself. Many teams still carry a stop-the-world mental model from older HotSpot history, while modern G1, ZGC, and Shenandoah shift major work into concurrent phases. The problem is that concurrent work is not free. It turns one visible large pause into many smaller costs distributed across application execution: barrier overhead, concurrent-thread CPU, additional metadata, floating garbage, forwarding state, remembered-set maintenance, and concurrent marking or relocation pressure on caches and memory bandwidth.

Because of that, at least five categories of metrics must be observed together. The first category is pause metrics: Young pauses, Mixed pauses, remark phases, cleanup phases, relocation pauses, and similar collector-specific stops. The second category is concurrent metrics: whether concurrent phases keep up with allocation pressure, and whether concurrent GC threads meaningfully compete with application threads for CPU. The third category is memory metrics: whether heap occupancy after GC keeps climbing, whether old-generation usage becomes irreversible, and whether RSS keeps rising even while heap appears stable. The fourth category is allocation metrics: allocation rate, survivor pressure, promotion rate, and humongous-object frequency. The fifth category is tail-risk metrics: p99 and p999 latency, request queueing time, timeout ratios, and retry amplification.

If a service, under its own workload and evidence window, shows only a few milliseconds of average GC pause but still has unacceptable p999 latency, the question is not “why is average GC good?” The better question is whether certain phases align with traffic peaks, whether allocation spikes occur during the critical window, and whether lock contention or queue buildup amplifies those phases. Conversely, if a collector occasionally stops for tens or hundreds of milliseconds inside an offline batch pipeline but the measured total wall-clock time and cost improve for that pipeline, that can still be the correct choice. Engineering judgment always outranks metric absolutism.

1.3 Why GC Is Best Understood as a Contract

Calling GC a “contract” forces a team to admit something important: GC is not something the JVM does alone. It is a negotiated result of object lifetimes, thread models, cache policy, serialization strategy, container limits, observability quality, and release discipline. HotSpot merely executes the terms. If the service keeps unbounded caches, constructs large temporary arrays, accumulates decoded payloads in memory, sizes heap against the container limit with no native headroom, gives GC and application threads the same constrained CPU budget, and does not preserve GC logs or JFR, then the collector is being asked to uphold a broken contract.

This becomes more obvious in cloud-native environments. On a large bare-metal host, extra native memory might not kill a process immediately. In Kubernetes or another strict cgroup environment, RSS crossing the limit leads to OOMKill, not a gentle slowdown. Many teams still inspect only heap metrics and assume memory is safe. Then direct buffers, Metaspace, thread stacks, native libraries, code cache, or GC metadata push RSS past the cgroup limit. At that point enlarging the heap often makes the process die sooner.

1.4 Version Boundaries Are Part of the Contract Too

Many “GC facts” are only true inside specific JDK ranges. If you state those ranges incorrectly, an engineering guide turns into misinformation. For example, many older materials still describe G1 Full GC as a single-threaded serial path, but JDK 10 introduced Parallel Full GC for G1 through JEP 307. ZGC should not be written as a generic “experimental low-latency collector” without qualification; from JDK 15 onward it is a product feature. Non-generational ZGC should not be treated as the default mental model for modern runtimes after the generational changes in JDK 23 and the non-generational removal in JDK 24. Shenandoah has similar boundary issues, especially around generational mode, product status, and default expectations.

Therefore the article uses intentionally conservative wording. When a behavior depends on JDK or JEP status, the text says “available from version X,” “default from version Y,” “removed in version Z,” or “experimental/product status” instead of implying timeless truth. If production uses a vendor-specific build, the team should still verify against that vendor’s release notes. OpenJDK mainline truth and production distribution truth often overlap, but not always perfectly.

2. Build a Diagnostic Entry from Symptoms: First Ask Whether It Is GC, Then What Kind of GC Problem It Is

2.1 Symptoms Are Not Causes, They Are Entry Points

Production incidents do not arrive labeled “GC problem.” They arrive as business symptoms: sporadic request timeouts, gateway jitter at traffic peaks, slower batch execution, pod OOMKill, RSS that never falls during low traffic, a CPU increase relative to last week, lower node density, load-test tail latency explosions, or behavior that is fine right after deployment but unstable several hours later. Any of these can involve GC, but any of them can also come from something else entirely.

The value of a symptom is therefore not that it gives the answer. Its value is that it narrows the search space. A strong architect does not begin with “should we switch to ZGC?” The first questions are narrower and more disciplined. Is this a latency problem, a throughput problem, a memory problem, an allocation-shape problem, a pause problem, or a container-budget problem? Is it continuous, periodic, or only triggered at traffic peaks? Does it affect one version, one workload slice, one data profile, one instance, or every instance equally? Does it happen during cold start, steady state, post-deploy warming, batch entry, or exactly when a downstream service is unstable?

Only after the symptom is classified can you decide which evidence to collect first. Without that step, teams often pull GC logs, heap dumps, thread dumps, CPU profiles, slow queries, and container dashboards all at once. The result is more information but not more judgment. People still end up saying, “It feels like GC.”

2.2 Throughput Loss: GC May Be the Drag, or It May Be the Amplifier

Throughput degradation is one of the easiest classes of issue to misdiagnose because it often correlates with more GC cycles, higher CPU, and lower request capacity. That makes “GC is stealing the CPU” sound immediately plausible. But the more useful production statement is subtler: GC may be the drag, or GC may be the amplifier of an already unstable system.

If allocation rate jumps because requests now parse larger JSON payloads, create more temporary strings, build more intermediate maps and lists, or use more decompression and encoding buffers, Young GC frequency will naturally rise. That does not automatically mean a collector switch is needed. The actual correction may live in object lifetime, buffer reuse, or a different data structure. On the other hand, if object lifetimes are stable but the collector’s concurrent work clearly cannot keep up with allocation pressure, and the system starts falling back into more disruptive paths, then the collector boundary is a more credible suspect.

To judge throughput correctly, several observations must be tied together. How much total CPU is consumed, and how much of it is spent in GC threads? Does throughput decline at the same time that allocation rate surges? Does old-generation occupancy keep rising because promotion pressure is pushing long-lived state into older regions? After each cycle, is free space meaningfully restored? Beyond pause time, do concurrent marking, relocation, or remembered-set maintenance consume persistent CPU? Only when those strands align can throughput loss be assigned to GC rather than to an application design that forces GC to do too much work.

2.3 Latency Spikes: Averages Hide the Most Expensive Risk

If the service’s primary SLO is p99 or p999, then “average GC pause is only a few milliseconds” is almost useless. The production risk typically appears in the tail. One remark phase, one evacuation pause, or one humongous allocation event can align with thread-pool pressure, database queuing, network retries, and downstream jitter to create a costly SLA miss.

One common mistake is treating a single pause duration as equivalent to end-to-end request latency. In reality, GC pause is only one component. A 20 ms pause during a quiet window may have no visible business effect. The same 20 ms during a period of queue buildup, near-saturated pools, and retry fan-out can be the final push that drives a subset of requests far beyond the service contract. Architects therefore need not only the pause itself, but also the system state surrounding the pause.

That is why JFR and tracing must sit beside GC logs. GC logs show when and what kind of collector event occurred. JFR can fill in allocation, safepoints, thread states, method hotspots, lock contention, and I/O context. Distributed traces or endpoint latency metrics show whether business requests truly moved in the same window. Without those layers, tail-latency judgment is too easy to distort.

2.4 Memory Symptoms: Separate Heap, Committed Heap, RSS, and Container Kill

Many “memory problems” are really vocabulary problems. Heap used, heap committed, process RSS, container working set, cgroup usage, JVM OutOfMemoryError, and a kernel OOM kill are not the same thing. If an architect does not separate them at the language level, the engineering response is likely to be wrong.

For example, heap used may fall sharply after GC while RSS remains high. That does not necessarily mean the collector failed to reclaim memory. Committed heap may not shrink immediately. The OS may still keep pages resident. Or RSS may be dominated by Metaspace, direct memory, thread stacks, code cache, native allocators, or mapped files. Conversely, if heap used itself keeps climbing and post-GC occupancy no longer returns to a stable baseline, the problem is more consistent with retention or leak behavior.

Every memory symptom should therefore be forced through one question first: is the pressure in Java heap, GC overhead, Metaspace, direct buffer memory, native memory, or container RSS? Without that split, parameter tuning becomes a guess.

2.5 Allocation Pressure, Promotion Failure, Humongous Allocation, and Container OOMKill

In modern Java services, the most overlooked problems are often not classic “too much garbage” scenarios but changes in allocation shape and object form. A request path can keep exactly the same business semantics while producing more temporary objects, more decoding buffers, more serialization intermediates, or larger aggregation arrays. That alone can change the Young GC rhythm. If survivor and old spaces cannot absorb the new survival profile efficiently, the issue becomes promotion pressure, promotion failure, or poor mixed-collection yield.

Humongous allocation is often underestimated in the same way. In region-based collectors such as G1, large objects follow special paths. Frequent large-object allocation is not merely “more memory usage.” It can change region usage patterns, reduce reclaim flexibility, and destabilize mixed-collection efficiency. Services processing large JSON payloads, protobuf batches, compressed bundles, images, or wide in-memory buffers regularly encounter this risk.

Container OOMKill is even more dangerous because it often happens before Java throws any OutOfMemoryError. The kernel only cares whether RSS exceeds the cgroup limit. For architects, that means “the JVM did not throw OOM” is not evidence that memory was healthy. If the team only watches heap graphs, it will miss the real boundary.

2.6 A Minimal Symptom-to-Entry Table

In production, the most valuable artifact is often not the most sophisticated profiler but a decision table that helps the team narrow the issue quickly.

| Symptom | First suspect | First evidence to collect | Frequent misread |

|---|---|---|---|

| p99 or p999 spikes | GC pause, safepoint, thread-pool queueing, downstream jitter | GC log, JFR, request latency timeline, thread dump | blaming every slow request on GC |

| throughput loss at peak load | allocation rate, concurrent GC CPU, changed object lifetime | GC log, allocation profile, CPU profile, JFR | switching collectors only because GC frequency increased |

| persistent old-generation growth | cache retention, listener leak, classloader retention, promotion rate | heap dump, old-object evidence, GC log | assuming “larger heap will solve it” |

| container OOMKill | wrong RSS budget, direct/native memory, thread stacks | cgroup metrics, NMT, container events, JFR | watching only heap usage |

| more Full GC | evacuation failure, humongous pressure, insufficient reserve | GC log phase detail, region behavior | treating Full GC as a normal occasional event |

| high CPU with no obvious long pause | concurrent marking or relocation, barrier cost, application hotspots | CPU profile, JFR, concurrent phase timing | assuming short pauses mean GC is irrelevant |

The point of the table is not to replace analysis. It is to force the first move to be evidence-driven rather than instinct-driven.

3. GC Logs and JFR: How the Evidence Chain Should Be Built

3.1 Why GC Logs Are the Beginning, Not the End

Unified GC logging is the most basic continuously available evidence surface on the JVM side. It tells you what kind of collection happened, how long pauses took, how heap occupancy changed before and after, how concurrent phases progressed, and whether collector-specific warnings such as Full GC, humongous pressure, to-space exhaustion, evacuation failure, or allocation stalls appeared.

But GC logs answer only a subset of questions. They are like an incident camera focused on the collector’s point of view. They record collector events, not the whole causal chain of the service. A log can tell you, “a 40 ms pause happened here,” but it cannot by itself tell you whether that pause aligned with gateway tail latency, whether thread pools were already saturated, whether the database was unstable, or whether the application had just entered a massive allocation burst. GC logs are therefore excellent for establishing whether GC participated. They are not sufficient on their own for final root-cause judgment.

The correct approach is layered. Use GC logs to determine what the collector did. Then use JFR, latency metrics, CPU profiles, heap evidence, and container metrics to determine why it happened and whether it was the principal cause.

3.2 What to Read First in a GC Log

The common problem with GC logs is not that they lack information. The problem is that teams do not know which fields deserve attention first. Many people open the log and immediately stare at pause time, skipping the surrounding context that actually explains the incident.

A better reading order is usually this. First, identify collector type and event frequency. Frequent Young GC is not automatically bad, but if frequency rises exactly with peak traffic or reclaim quality drops, it deserves further analysis. Mixed GC with consistently poor reclaim may mean the old generation mostly contains truly live data, or that the reclaim plan is no longer matching the current object graph. Full GC should always be treated as a serious signal, not as “normal JVM behavior.”

Second, inspect before-and-after heap occupancy. Is post-GC space recovery strong or weak? Does the old generation rise monotonically? Does GC effectively clear Eden but barely move old occupancy? That distinction directs the next step toward allocation pressure, promotion behavior, or retention.

Third, inspect phase decomposition. Some incidents are not caused by total pause alone but by one specific subphase: root scanning, remembered-set scanning, reference processing, evacuation, relocation preparation, remark, and so on. Without a phase breakdown, the collector bottleneck remains invisible.

Fourth, read the collector-specific warnings. G1 calls attention to humongous allocation, to-space exhaustion, evacuation failure, and mixed-reclaim behavior. ZGC requires attention to allocation stall, concurrent progression, and mark or relocation timing. Shenandoah has its own warning vocabulary around concurrent evacuation and degraded or full paths. Each collector has different danger words. They should not be read as if they all speak the same diagnostic language.

3.3 Align GC Logs with Application Latency

The most important production step is aligning collector events with business time. A GC log may show an 18 ms pause that looks harmless in isolation. But if that 18 ms occurs exactly while the gateway is queueing, the database is slow, and retries are amplifying load, the real business impact can be far larger than the pause number. Conversely, a 60 ms pause during an offline batch task may be operationally acceptable.

Latency-sensitive analysis should therefore place GC timelines next to request latency distributions, error-rate curves, thread-pool queue lengths, connection-pool saturation, and CPU peaks. The real question is correlation and ordering. Did GC become expensive only after the system was already unstable, suggesting GC was an amplifier? Or did a collector event occur first and business metrics deteriorate immediately afterward, suggesting GC was the initiator? Those two stories demand different fixes.

3.4 How JFR Covers the Blind Spots of GC Logs

JFR matters because it does not isolate GC from the rest of the runtime. It lets you observe GC inside the broader JVM context. For GC diagnosis, JFR typically fills four kinds of blind spots.

The first is allocation evidence. GC logs reflect collection rhythm indirectly, but JFR exposes allocation behavior more directly: allocation rate, hotspot allocation paths, TLAB and non-TLAB patterns, and object samples. That helps distinguish a collector limitation from an application design that generates excessive temporary objects.

The second is thread and safepoint evidence. Many teams mislabel any safepoint or runtime pause as “GC.” JFR helps separate GC-related safepoints from other VM operations and thread-state transitions.

The third is method and CPU evidence. If concurrent GC threads truly consume meaningful CPU, JFR often makes it visible. If CPU is actually burned in business code, serialization, compression, encryption, regex, or logging, JFR helps rule out GC as the main suspect.

The fourth is I/O, locking, and external-dependency evidence. Some slow requests happen to coincide with GC but spend most of their time waiting on databases, networks, files, or locks. Only a combined runtime view prevents GC from being over-credited as the unique cause.

3.5 A Sampling Order of “Logs First, JFR Second, Dump Third”

Sampling order is itself an engineering discipline. Many teams jump to heap dumps or JVM flag changes as soon as GC is suspected. That is a costly low-signal habit. A stronger sequence is: enable or preserve unified GC logs first, confirm collector-side symptoms; record JFR second, correlate GC with allocation, CPU, thread, safepoint, and I/O behavior; then decide whether a heap dump or Native Memory Tracking is necessary; only after that should parameter changes start.

The reason is practical. Heap dumps are heavy. NMT has a cost. Parameter changes alter runtime behavior directly. If those steps come before the basic evidence chain is established, the team can easily end up with a story like “after we changed it, things got better,” without knowing whether the improvement came from the flag, traffic fluctuation, cache warm-up, or an unrelated deployment event.

3.6 A Minimal Baseline for GC Log and JFR Capture

Scenario: a service is already showing throughput, latency, or memory symptoms, and the team needs to preserve a minimum viable GC evidence surface because none exists today.

Reason: without a baseline evidence path, any collector change or parameter change becomes guesswork and misdiagnosis is likely.

What to watch: collector type, phase breakdown, before-and-after heap occupancy, and whether JFR events for allocation, safepoints, CPU, threads, and I/O can be aligned with the incident window.

Production boundary: this is a starting point for evidence retention, not a universal final production template. Log rotation, JFR duration, output location, and permissions must be adapted to platform policy.

java \

-Xlog:gc*,gc+heap=debug,gc+ergo=trace,gc+phases=debug:file=/var/log/app/gc.log:time,uptime,level,tags:filecount=10,filesize=50m \

-XX:StartFlightRecording=filename=/var/log/app/app.jfr,settings=profile,dumponexit=true,maxage=2h,maxsize=1024m \

-jar app.jarThe important part is not that every tag is identical. The important part is that the command expresses two operating principles: GC evidence should be continuous and alignable, and GC logs plus JFR should be treated as complementary evidence rather than substitutes.

4. Heap, Non-Heap, and Container Memory: Why Heap Can Look Fine While the Container Still Dies

4.1 The Memory Ledger of a Java Process Is Never Just Heap

Many GC tuning efforts fail not because the collector is poor, but because the team never built a complete memory ledger for the Java process. Java heap is only the most visible part. It is not the whole story. Teams that watch heap alone routinely create dangerous container configurations, for example by pushing -Xmx close to the memory limit and then being surprised when direct buffers, thread stacks, Metaspace, native libraries, or GC metadata push RSS over the edge.

At a production-operations level, at least the following spaces must be present in the budget: heap, Metaspace, code cache, direct memory, thread stacks, GC metadata, JIT/compiler memory, native allocator overhead, and additional native costs from mapped files, TLS, compression libraries, networking stacks, and similar dependencies. Only by budgeting all of them can you answer the real question: how much heap can this pod safely afford?

This is also why the sentence “the JVM did not throw OOM, so memory was fine” is unsafe. The kernel’s OOM killer does not care whether heap looks healthy from Java’s point of view. It cares whether total process RSS exceeds the cgroup limit.

4.2 Heap Used, Heap Committed, RSS, and Working Set Are Different Layers

Heap used is the amount of heap truly occupied by objects. Heap committed is the amount of memory the JVM has reserved from the OS for heap use. RSS is resident process memory. Container working set adds yet another runtime view through the cgroup and page-accounting lens. These layers do not map one-to-one.

For example, heap used can fall after GC while RSS remains high. That does not automatically mean “GC did not reclaim enough.” Committed heap may not shrink immediately. Resident pages may remain mapped. Or the process may be dominated by Metaspace, direct memory, thread stacks, code cache, native allocators, or mapped files. Conversely, when heap used itself climbs continuously and post-GC occupancy never returns to baseline, the issue looks much more like long-lived retention or a leak.

Memory analysis must therefore begin by naming the layer under pressure. If the team cannot answer whether the alert is about high heap used or high process RSS, it is still too early to tune GC flags.

4.3 Why Metaspace, Direct Memory, and Thread Stacks Get Misread as GC Problems

Metaspace growth often comes from classloader leaks, dynamic proxies, repeated hot-loading, plugin frameworks, or code-generation-heavy systems. It is not Java heap, but if it drives RSS growth, teams still tend to blame GC. Direct memory is common in Netty, NIO, zero-copy paths, compression, and some database drivers. Its special risk is observability. Application metrics often do not expose it by default, so the team must recover it from JVM flags, NMT, container metrics, or library-specific telemetry. Thread-stack pressure is tied to thread count, carrier threads, pool sizing, scheduling models, and other concurrency choices rather than to collector mechanics.

These spaces are misdiagnosed as GC issues because their external symptoms look similar: high RSS, pod death, service jitter, higher CPU. But the fixes are entirely different. Metaspace problems are usually classloading-governance problems. Direct-memory incidents are usually I/O and buffer-lifetime problems. Thread-stack explosions are thread-model and concurrency-governance problems. GC may get busier under the same pressure, but that does not make it the root cause.

4.4 In Containers, the Real Question Is Not “How Big Is the Heap?” but “How Is the Budget Split?”

In containers, heap cannot be chosen in isolation. It must be derived from the whole process budget. A common anti-pattern is setting -Xmx to 80% to 95% of the limit because that “maximizes utilization.” In tiny low-thread workloads that may survive. In real Java services, simultaneous growth in threads, Metaspace, direct memory, TLS or native library state, and GC metadata makes that strategy fragile very quickly.

A healthier practice is to estimate stable and peak non-heap budget first and then decide the safe heap ceiling. There is no universal constant because workloads differ too much. RPC gateways and network proxies are often direct-memory-sensitive. Reflection-heavy and proxy-heavy services can be more Metaspace-sensitive. Thread-rich services have larger stack budgets. Low-latency collectors can also keep more auxiliary metadata and concurrent headroom in play. The correct production question is therefore not “what Xmx should we use?” but “what do the peak contributions of each memory class look like, and how much safe space remains for heap?“

4.5 A Single Mental Ledger for Container Memory

To keep postmortems from collapsing every incident into “memory was high,” the process can be described with a unified ledger:

Here, is the container-level RSS budget for the Java process, is heap, is Metaspace, is code cache, is direct memory, is thread stacks, is GC metadata, is JIT/compiler memory, and is native libraries plus allocator overhead.

This is not a precise HotSpot accounting identity. It is an architectural budgeting view. Its purpose is to force the team to remember that when RSS explodes, heap is only one suspect, and when heap is under pressure, the container budget still governs the full process.

4.6 Minimal Observation Actions for Container Memory

Scenario: the service runs in Kubernetes or another cgroup environment and the team needs to confirm whether container OOM events, RSS growth, and Java process memory categories line up.

Reason: if the team watches only JVM heap, direct/native memory, Metaspace, and thread stacks remain invisible and container OOMKill gets misread as “the heap is too small” or “GC is bad.”

What to watch: the relationship between the container limit, RSS, JVM heap, NMT output, thread count, and direct-memory-related signals.

Production boundary: NMT has overhead in some environments and is not appropriate for permanent use in every peak instance. It is usually better on gray instances, in diagnosis windows, or in a pre-production replay.

jcmd <pid> VM.native_memory summary

jcmd <pid> GC.heap_info

jcmd <pid> Thread.print -locksThe value of this set is not the commands themselves. The value is that they force the discussion to separate heap, native memory, and thread-state pressure instead of hiding all of it behind the vague phrase “memory is high.”

5. Collector Families and Workload Boundaries: Do Not Select by Slogan

5.1 Serial and Parallel: Not Obsolete, Just Optimizing for Different Goals

When teams discuss GC selection, they often skip directly to G1, ZGC, and Shenandoah as though Serial and Parallel no longer matter. From a production-engineering point of view, that is a mistake because it creates the illusion that “modern” collectors are automatically better in every dimension. They are not. Serial and Parallel still have legitimate places; their optimization targets are just different.

Serial is conceptually clear: simple implementation, small heaps, minimal resources, low concurrency, or certain tool-like and short-lived processes. It is not suitable for most high-concurrency services, but in truly small tasks the overhead and complexity of more advanced concurrent protocols may not buy enough value.

Parallel GC is throughput-first by design. It accepts larger stop-the-world pauses and uses more parallel work during those pauses to maximize total application work over a longer time horizon. For offline batch jobs, ETL stages, indexing workloads, and pipelines that tolerate visible pauses but care deeply about overall completion cost, that can be a rational and efficient choice. The issue is not that Parallel is outdated. The issue is that it gets misplaced into strict tail-latency services and then blamed for doing exactly what it was designed to do.

5.2 G1: The General Server Starting Point, but Never “Safe by Default”

G1 is the default starting point for many modern JDK services not because it dominates every workload, but because it offers a balanced compromise for a broad class of server workloads: region-based heap management, pause goals, reasonable scalability across medium and large heaps, mature diagnostics, and practical compatibility with web and RPC services. Many teams do not actually need an immediate move to ZGC. They need to use G1 correctly first.

But “default starting point” must not be confused with “safe without understanding.” The danger of G1 is precisely that teams often assume a default collector requires no evidence discipline. In reality, G1 must still be understood in terms of reclaim yield, remembered-set cost, mixed-collection behavior, humongous-object pressure, and the limits of pause goals. Typical mistakes include treating MaxGCPauseMillis as a hard contract, ignoring old-generation reclaim quality, ignoring remembered-set scan costs, ignoring the effect of large-object layout on region usage, and misreading worker behavior under constrained container CPU.

If a team has not yet built stable GC log and JFR habits, G1 is often the right first station rather than a temporary stepping stone because its problems are usually easier to observe and explain. Put differently: it is often more valuable to use the general collector well than to jump to a more sophisticated one prematurely.

5.3 ZGC: Low-Pause-Oriented, but Not a Universal Cure

ZGC is attractive because it reduces the tight dependence between pause time and heap size. Through colored-pointer metadata, load barriers, concurrent marking, and concurrent relocation, it makes it easier for large-heap services to keep GC pauses within a shorter range. For services with large heaps, strict tail-latency objectives, and a willingness to pay concurrent overhead, it is a strong candidate.

But ZGC should never be reduced to the slogan “use ZGC for low latency.” It is still bounded by CPU, memory bandwidth, allocation rate, and object lifetime. Low pause does not automatically imply low end-to-end latency if the real bottleneck is queueing, the database, lock contention, or unstable downstream services. ZGC also demands a different operational mindset from G1. Teams must be prepared to maintain corresponding runtime knowledge. Finally, its version boundaries matter: product from JDK 15, generational support from JDK 21, generational default from JDK 23, and non-generational removal in JDK 24. Those facts change how logs, defaults, and mental models should be interpreted.

ZGC therefore best fits services where pause risk has already been proven to be central, heap size is large enough to matter, and the team can afford the operational and CPU complexity of its concurrent design.

5.4 Shenandoah: Also a Low-Pause Route, but Distribution Support and Team Capability Matter More

Like ZGC, Shenandoah is a low-pause-oriented collector, but it follows a different implementation path, especially through Brooks pointers, concurrent evacuation, and its barrier semantics. For architects, selecting Shenandoah should not rest on the statement “it is also low pause.” The better questions are whether the chosen distribution supports it predictably, whether the team can interpret its runtime behavior confidently, and whether the existing rollout and diagnosis process covers its degraded or fallback paths.

One practical challenge with Shenandoah is organizational familiarity. Many teams understand G1 or at least know how to start debugging G1. Far fewer teams have comparable intuition for Shenandoah logs, concurrency phases, or failure modes. That matters because a collector choice is not only a technical choice. It is also a bet on who will operate, debug, and explain that choice at 3 a.m.

Statements about generational Shenandoah should also remain cautious. It is reasonable to describe its experimental and product phases conservatively, but it is not safe to flatten those boundaries into “the modern default” or treat it as a fully interchangeable twin of ZGC.

5.5 A Production Judgment Frame for G1, ZGC, Shenandoah, Parallel, and Serial

A stronger selection process ranks collectors not by fashion but by workload goals, heap size, latency tolerance, CPU budget, container density, and team capability.

| Collector | Better fit | Primary benefit | Primary cost | Frequent mistake |

|---|---|---|---|---|

| Serial | tiny heaps, tiny tools, low-concurrency processes | simplicity, low auxiliary overhead | long pauses, weak scaling | using it for high-concurrency services |

| Parallel | throughput-first batch or offline workloads | strong total throughput, simple model | visible STW pauses | using it for strict tail-latency online services |

| G1 | general server workloads, medium to large heaps, balanced goals | mature default, strong evidence surface, balanced compromise | remembered-set cost, mixed-GC tuning effort | treating the pause goal as a promise |

| ZGC | large heaps, tail-latency-sensitive online services with CPU headroom | easier pause control, strong large-heap story | barrier and concurrent overhead, higher operational learning curve | assuming a switch will erase every slow request |

| Shenandoah | low-pause workloads with strong distribution and team support | low-pause route, concurrent collection | operational learning curve, distribution-specific behavior | treating it as “just another name for ZGC” |

The table is not a replacement for load testing. It is a replacement for slogans. If the team cannot explain why a given service belongs in one row rather than another, then a collector has not actually been selected yet.

6. The Tuning Workflow: Hypotheses, Comparison, Gray Rollout, and Rollback Instead of One Giant Flag Rewrite

6.1 Tuning Is Not Configuration Art, It Is Evidence-Governed Experimentation

Many GC tuning efforts fail not because a parameter was absurd, but because the whole process resembled alchemy. Teams change ten flags at once, deploy them directly, compare against a different traffic pattern, keep no rollback criteria, and then draw conclusions from charts that no longer isolate a single cause. Even when such tuning appears to succeed, it teaches the organization almost nothing.

A mature tuning workflow behaves like an experiment. Define the symptom and goal. Build the baseline evidence. Form a hypothesis. Change only a small number of variables. Compare under the same workload shape and the same metric surface. Then use staged rollout and rollback conditions to contain the risk. Without that chain, GC tuning becomes irreproducible even if it occasionally lands on a better result.

6.2 Sample and Reproduce Before Forming the Hypothesis

The most dangerous move is changing parameters before understanding the incident window. The better order is to gather the incident window’s GC logs, JFR, latency distributions, container metrics, and CPU behavior first; if production evidence is incomplete, build a replay or diagnostic environment that captures the same critical workload shapes. GC problems are highly dependent on allocation and survival profiles. Without a comparable workload, parameter experiments have little explanatory power.

Reproduction does not require a perfect clone of production traffic, but it should preserve the key shapes: peak allocation rate, object-size distribution, typical request concurrency, batch or burst behavior, large-object frequency, and container CPU or memory limits. Only then does an experiment deserve to inform production.

6.3 Organize Parameters Around Hypotheses, Not Hypotheses Around Parameter Lists

Good tuning starts from a hypothesis. Bad tuning starts from a parameter list. If Young GC is frequent but fast, and business latency is stable, the working hypothesis might be “high allocation rate is a normal property of this workload and is not the incident.” In that case enlarging young space merely to make GC less frequent may be pointless or harmful. If Mixed GC has poor reclaim yield, old occupancy never comes back down, and heap evidence shows long-lived cache objects, then the first hypothesis should focus on object lifetime and cache governance rather than on collector mechanics.

Collector parameters become meaningful only after the problem points at collector boundaries. In G1, you might test whether a pause goal is unrealistically aggressive, whether humongous pressure is distorting region behavior, or whether heap reserve and object layout need to be rethought. In ZGC, you might test whether concurrent work can keep up with allocation rate and whether CPU quota is sufficient. In Shenandoah, you might test whether concurrent evacuation remains stable under the exact distribution and build in use. Parameters are tools for validating hypotheses. They are not substitutes for having one.

6.4 Collector-Specific Tuning Still Has to Follow the Same Workflow

Some engineers assume that a general evidence workflow is useful only for broad issues and that collector-specific tuning can jump straight to official recommendations. In practice the opposite is true. The more collector-specific the parameter, the more carefully it should be tied back to workload evidence.

G1 pause targets, IHOP behavior, young-generation sizing, humongous-object risk, and mixed-reclaim yield all depend on allocation rate and survival behavior. ZGC headroom, concurrent progress, and allocation-stall risk depend on CPU quota and peak allocation bursts. Shenandoah degraded paths and distribution support cannot be interpreted apart from the exact build in production. The collector changes. The discipline does not.

6.5 Gray Rollout, Rollback, and Continuous Observation Are Half the Work

GC changes are runtime-behavior changes. That makes them riskier than most application configuration tweaks. Whether you are adjusting a G1 policy, switching to ZGC, or revising heap and container sizing, the change should be treated as a gray rollout candidate with explicit rollback conditions. The team should know in advance which metric regressions force rollback: p99 or p999 degradation, RSS growth, CPU elevation, error-rate movement, or collector-specific danger events.

Continuous observation also cannot stop at “GC pauses became shorter.” The real questions are broader. Did business throughput improve? Did tail latency converge? Did pod density improve? Did CPU cost rise? Did Full GC disappear? Did old-generation occupancy after GC stabilize? Did new instability patterns appear? Looking only at collector-local improvement is one of the fastest ways to end in a local optimum that hurts the service.

6.6 A Minimal Tuning Experiment Template

Scenario: the team has already shown through logs and JFR that GC is likely part of the main problem and now needs a controlled single-variable experiment for a parameter adjustment or collector decision.

Reason: without a common experiment template, teams tend to introduce many changes simultaneously and can no longer explain the result.

What to watch: p95, p99, p999, throughput, CPU, RSS, GC phases, post-GC heap occupancy, and error or timeout ratios under the same workload.

Production boundary: the template is only a skeleton for disciplined single-variable trials. Real execution still needs version identifiers, container limits, workload metadata, and explicit rollback criteria.

experiment:

hypothesis: "Poor G1 mixed-GC reclaim is correlated with increased humongous allocation"

baseline:

jdk: "25.0.x vendor-build"

collector: "G1"

workload: "peak-like replay"

change:

type: "single-variable"

variable: "object layout and batch size"

observe:

- p99_latency

- throughput

- rss

- gc_pause

- old_after_gc

- humongous_events

rollback_when:

- "p99 regress > 10%"

- "rss grow > 15%"

- "full_gc appears"The most important thing about this template is not the exact field list. It is the discipline it imposes: what exactly changed, how success will be proven, and when failure will be acknowledged and rolled back.

7. Choosing G1, ZGC, Shenandoah, Parallel, and Serial, and Avoiding Their Common Misuses

7.1 The Right Question for G1: Have You Actually Understood the General-Purpose Balance Point?

G1’s greatest strength is that it is general enough, mature enough, and observable enough to support shared operational understanding across many teams. For a large fraction of business services, the problem is not that G1 is inadequate. The problem is that the team has never really learned to reason from G1 evidence. If a service already shows stable throughput, acceptable tail latency, healthy post-GC recovery, and a realistic container budget under G1, there is no reason to switch collectors merely to appear more modern.

The classic G1 mistakes are predictable: focusing on pause time while ignoring old-generation reclaim quality, seeing humongous pressure and responding only by enlarging the heap, treating MaxGCPauseMillis as a service contract, assuming GC workers are free under container CPU limits, and ignoring remembered-set cost. Those are not separate misunderstandings. They are different expressions of the same black-box mentality.

7.2 The Right Question for ZGC: Does the Low-Pause Gain Truly Outweigh the Concurrent Cost?

ZGC is not right for every “low-latency service.” It is right for services where pause risk has already been established as a major factor, heap size is large enough to make pause scaling matter, and CPU headroom is sufficient to pay the concurrent cost. If the real bottleneck is a downstream database, lock contention, or application-object design, switching to ZGC may look sophisticated but deliver little business improvement.

The classic ZGC mistake is assuming that lower pause necessarily means lower end-to-end latency. In real systems that is almost never automatic because request latency also depends on queueing, pool saturation, network variance, thread scheduling, and data-structure behavior. The correct question is narrower: under this workload and this container budget, are the pauses ZGC reduces the exact pauses that are driving our expensive tail risk?

7.3 The Right Question for Shenandoah: Can the Organization Operate It Reliably?

Shenandoah is often compressed into the phrase “another low-pause collector,” but the operational question is more concrete: does the chosen distribution support it stably, does the team understand its warnings and fallback paths, do load tests and gray rollouts cover those paths, and is someone responsible for revalidating behavior when the JDK is upgraded? Many times Shenandoah is technically possible while the real blocker is organizational readiness.

Therefore, if the team still lacks a solid evidence chain for G1 and ZGC, Shenandoah should not be treated as a casual exploratory alternative. Collectors are not toys for runtime experimentation in critical services. They are operational commitments.

7.4 The Right Question for Parallel GC: Does the Workload Accept Pauses in Exchange for Total Throughput?

Parallel GC is often dismissed too quickly in modern discussions, as though production use automatically implies low-pause collectors. In reality, for throughput-first offline workloads and batch pipelines where total completion time matters more than request-level pauses, Parallel GC remains a rational option. Its trade-off is honest: do more work during stop-the-world windows in order to improve total work completed over time.

The mistake is not using Parallel GC. The mistake is placing it in a service whose primary promise is strict tail latency and then acting surprised that pauses are visible.

7.5 The Right Question for Serial GC: Is the Process Small Enough and Simple Enough?

Serial GC will not be the first recommendation for most modern services, but it has not vanished. Small tools, CLI processes, tiny heaps, extremely simple jobs, or constrained environments can still benefit from its simplicity. The operative word is fit. A process that does not need concurrency or a large heap does not automatically benefit from more sophisticated collector machinery.

7.6 Never Turn Collector Selection into an Organizational Slogan

One of the most dangerous architecture-governance habits is turning a collector into a slogan: “we use ZGC everywhere,” “all services should stay on G1,” “new services must use a low-latency collector.” Such slogans look efficient in management language but erase workload diversity, container-budget diversity, and SLO diversity. A mature organization should standardize the proof threshold and rollout discipline, not the mandatory collector.

The right thing to unify is “how we prove a collector choice,” not “which collector everyone must choose.”

8. Production Runbook: What the Team Should Actually Do When GC Is Suspected

8.1 Step One: Confirm the Incident Window and Business Symptom

The first step in a production runbook is never the JVM flag list. It is the incident window. When did the problem begin? How long did it last? Which instances were affected? Did it hit one class of requests, one availability zone, one data profile, or every instance? Did it align with a traffic peak, a deployment, or downstream instability? Without that window, GC analysis loses time coordinates.

At this stage the team should reduce the incident to two crucial pieces of information. First, what exactly is the business symptom: p99 timeout, throughput loss, OOMKill, elevated CPU, or something else? Second, is the symptom a sudden burst or a slow accumulation? Sudden behavior points more toward pauses, spikes, and peak competition. Slow accumulation points more toward retention, cache growth, native-memory expansion, or declining reclaim efficiency.

8.2 Step Two: Extract GC Logs, JFR, Container Metrics, and Business Metrics from the Same Window

The second action is to pull the GC log, JFR, latency distribution, error rate, RSS, heap, CPU, thread count, and pool metrics from that same incident window. The key is not “more data.” The key is time alignment. Evidence from different windows often creates false correlation.

If the current instance lacks JFR or GC logging, that is evidence of incomplete observability, not evidence that GC is innocent. The right response is not to guess. It is to improve the future evidence surface before making large runtime changes.

8.3 Step Three: Decide Whether GC Was the Root Cause, a Contributing Factor, or Only a Bystander

This is the critical step many teams skip. The evidence must support one of three roles. GC may be the root cause, meaning the collector event itself sufficiently explains the business symptom. GC may be a contributing factor, meaning the system was already near instability and GC amplified the tail. Or GC may be only a bystander, meaning it looked busy but the real source was elsewhere: retention, slow SQL, lock contention, thread-pool exhaustion, downstream latency, or native-memory growth.

Only after that role is named should the workflow continue. Otherwise teams fall into a reflex: “we saw GC activity, so we started tuning GC.”

8.4 Step Four: Separate Allocation Problems, Survival Problems, Pause Problems, and Container Budget Problems

Even if GC clearly participated, the issue still needs one more split. GC-related does not mean collector-parameter-related. The real cause may be excessive allocation, long-lived object retention, one overly long pause subphase, too many humongous objects, weak old-generation reclaim, wrong container budgeting, native-memory overgrowth, or a mixture.

This split determines whether the next action belongs in code and object lifecycle or in collector behavior and sizing. Some of the most expensive incidents persist because teams skip this distinction and use flags to compensate for object-governance problems.

8.5 Step Five: Build a Small Hypothesis and a Rollback Path

Only after the issue is sufficiently specific should collector or parameter changes begin. Whether the change is to a G1-related setting, a move to ZGC, a revised heap/container ratio, a smaller batch size, or a cache-policy change, the rollback criteria must be explicit. There is no safe place in critical systems for “we’ll deploy and see what happens.”

8.6 Step Six: During Gray Rollout, Watch Only the Few Metrics That Matter

A gray rollout should not watch everything. It should watch the critical surface: p95, p99, p999, throughput, error rate, CPU, RSS, GC phase timing, post-GC old occupancy, and the appearance of collector-specific danger events. Watching too much diffuses attention. Watching only collector-local metrics risks missing the actual business outcome.

8.7 Step Seven: Record Why It Was Not Something Else

A mature GC postmortem records not only the final change but also why other explanations were excluded. Why was this not a slow database? Why was it not thread-pool exhaustion? Why was it not direct memory? Why was it not a classloader leak? Those exclusions help the team distinguish GC as the cause from GC as visible noise during the next incident.

8.8 A Minimal Runbook Command Set

Scenario: an online service is already showing visible symptoms and the on-call engineer needs a first round of GC evidence without destabilizing the instance further.

Reason: without a common first move, some people change flags, others dump heap, and others stare only at dashboards, which fragments the evidence.

What to watch: the current collector, heap shape, short-window GC rhythm, and whether process and container memory pressure line up with runtime behavior.

Production boundary: these commands assume the necessary permissions and tooling are available. In restricted environments they should follow the platform’s approved diagnostics window and command policy.

jcmd <pid> VM.flags

jcmd <pid> GC.heap_info

jstat -gcutil <pid> 5s 12The commands do not replace full analysis, but they are sufficient for the first runbook loop because they force the team to answer basic questions: which collector is actually running, what does the heap shape look like, and is the short-window rhythm already suspicious?

9. Common Misdiagnoses and Anti-Patterns: GC Is Often Not Tuned Wrong, It Is Seen Wrong

9.1 Watching Average Pause While Ignoring Tail Latency

This is the classic anti-pattern. Attractive average pause numbers do not imply healthy p99 or p999 behavior. For online services, the expensive incidents usually live in the tail, where a small number of collector events align with queueing and contention and produce disproportionate business damage. Teams that keep telling an average-based story will repeatedly miss the incidents that matter most.

9.2 Enlarging the Heap Every Time OOM Appears

Heap growth is sometimes justified, but if the team enlarges the heap before separating Java heap OOM, Metaspace growth, direct memory, and container OOMKill, the change usually hides the real issue temporarily or makes RSS pressure worse. The first question should be “which memory class is expanding?” not “how much larger should the heap be?“

9.3 Copying JVM Flags from the Internet

The worst property of copied flag templates is not that they are always wrong. The worst property is that they are detached from workload shape, JDK version, hardware, and container policy. A parameter set that works in another system says almost nothing about object lifetime and CPU budget in your own system. Worse, many popular examples come from older JDKs or obsolete collector assumptions and can easily conflict with modern defaults.

9.4 Misreading Slow Queries, Lock Contention, or Pool Queueing as GC

GC is a visible runtime event, so it is easy to make it the scapegoat. As soon as a pause appears in the same window as a slow request, teams become eager to believe it explains everything. In production the more common reality is that the system was already close to saturation because of lock contention, thread-pool queueing, slow SQL, or downstream latency, and GC only amplified the tail. Without thread, I/O, database, and tracing evidence beside the collector evidence, misdiagnosis is almost guaranteed.

9.5 Treating Container OOM as Java Heap OOM

Containers do not wait for Java to throw OutOfMemoryError. If RSS exceeds the cgroup limit, the process can die first. Teams that watch heap alone often end up deeply confused: heap never looked full, so why did the pod die? The answer is usually that the non-heap and native budget was never modeled.

9.6 Treating a Collector Switch as “Problem Solved”

Some teams switch collectors, see a temporary chart improvement, and declare victory. A more rigorous statement is narrower: the switch changed runtime reclamation behavior. Whether it actually solved the business problem still depends on throughput, tail latency, pod density, CPU cost, release stability, and rollback complexity. A collector switch is a tool, not proof of success.

9.7 Turning Experience Ratios into Engineering Law

Phrases such as “direct memory is usually only some fraction of heap,” “this collector is always better for large heaps,” or “this pause target is the sweet spot” may be useful as starting heuristics in conversation, but they are not engineering laws. Production is context. Heuristics are only valid inside context.

9.8 Turn GC governance into platform assets, not expert folklore

If a company’s GC capability depends mostly on a few JVM specialists making judgment calls during incidents, it may work in a small team but it will not scale across many services, tenants, JDK versions, container sizes, and operating models. The most valuable expert knowledge is often not the flag name. It is knowing when not to change a flag, knowing that one symptom may have three system-level causes, and knowing that one collector may be technically attractive but operationally expensive for the current team. If that knowledge lives only in chats, incident calls, and individual memory, it cannot become organizational capability.

A more sustainable approach is to turn GC governance into platform assets. The first asset is a shared vocabulary and metric dictionary: pause, safepoint, allocation rate, post-GC occupancy, RSS, native memory, direct buffer, Metaspace, container working set, and similar terms must mean the same thing across teams. The second asset is an evidence template: GC logs, JFR, container events, business latency, thread dumps, and pool metrics should be captured in the same time window. The third asset is a change template: collector switch, heap sizing, container-limit change, and direct-memory change should all state objective, hypothesis, rollback criteria, and observation window. The fourth asset is a postmortem library that records symptom, wrong turn, evidence, real cause, correction, and applicability boundary.

These assets let ordinary service teams follow a strong path before specialists arrive. They do not eliminate experts. They productize the repeatable part of expert judgment and reserve expert attention for the decisions that really require deep experience.

9.9 Container memory budgeting should begin from the service profile, not from a fixed ratio

In Kubernetes and similar platforms, GC conversations are often reduced to “what fraction of the container limit should Xmx use?” That shortcut is convenient, but it hides too much. The correct budget starts with the service profile. Is this a request/response service, batch job, aggregation gateway, message consumer, search/indexing system, AI-SDK integration, or a mixed workload with native libraries and direct buffers? Each profile has a different non-heap shape. Thread stacks, TLS, compression, Netty buffers, JNI or FFM use, class metadata, code cache, profiling agents, and sidecars can all change RSS.

Architects should at least answer four questions. First, will a larger heap actually improve the business objective, or will it merely squeeze native memory? Second, which non-heap components grow with traffic, connections, class loading, batch size, or tenant count? Third, does the selected collector need extra metadata or concurrent CPU budget? Fourth, when the container limit is hit, will the JVM notice first, or will the platform kill the process first? Without those questions, a fixed ratio is only a simple number hiding a complex system.

This budget also belongs in capacity review, not only in JVM arguments. Many incidents appear after the service profile changes: a lightweight HTTP service adds heavy JSON compression, a synchronous service starts using a high-concurrency AI SDK, a consumer adds local caching, or an edge service accepts more TLS connections. The memory ledger changed, but the container budget did not. The visible symptom may be busy GC or a pod kill, but the deeper failure is stale budgeting.

9.10 JDK upgrades must regression-test GC behavior, not only business behavior

JDK upgrades are often treated as compatibility work: does the code compile, do tests pass, does the framework support the version, and are removed APIs gone? For GC that is not enough. A new JDK may change defaults, improve or remove modes, adjust log format, change container awareness, optimize concurrent phases, expose new diagnostics, or invalidate old operational advice. Passing business tests only proves the application still runs. GC behavior regression proves that the runtime boundary is still trustworthy.

A reliable GC regression for a JDK upgrade should include three evidence classes. The first is static fact: JDK, vendor build, container image, default collector, key flags, GC logging, and JFR configuration. The second is baseline comparison under the same workload: allocation rate, pause distribution, post-GC occupancy, RSS, CPU, Full GC, and collector-specific danger events. The third is diagnostic availability: dashboards, log parsers, alert rules, runbook commands, and JFR analysis must still work. Discovering after the upgrade that logs changed, alerts stopped firing, or parsing scripts broke is also a production failure.

Upgrade review should also avoid bundling too many runtime changes together. If the team upgrades JDK, switches collector, changes heap size, modifies container limits, and releases business code at the same time, the next incident becomes hard to attribute. The safer path is to complete the smallest upgrade first, freeze GC variables, then evaluate new capabilities with separate evidence. An upgrade is not the moment to realize every runtime wish at once; it is the moment to reconfirm the contract.

9.11 Three common incidents need different triage paths

Latency spikes are the first case. The correct entry is not “which collector is lower latency?” It is to align business p99/p999, GC pauses, safepoints, pool queueing, downstream RTT, and retry behavior on the same timeline. If the spike belongs to one request class, tenant, or batch path, object lifetime, downstream queueing, or request fan-out may be the first suspect. If it is tightly aligned with a collector phase, then the pause type and collector state deserve deeper analysis.

Container OOMKill is the second case. The correct entry is not “increase or decrease heap?” It is to decide whether the JVM threw OutOfMemoryError, whether the container event shows cgroup kill, whether RSS diverged from heap used, and whether direct memory, thread count, Metaspace, native libraries, agents, or sidecars changed. If heap is normal while RSS rises, increasing heap may make the failure faster. If post-GC heap does not come down, object retention deserves attention.

Full GC spikes are the third case. The correct entry is not to switch collectors immediately. The team must identify the trigger: allocation failure, promotion failure, metadata pressure, humongous allocation, explicit GC, class unloading pressure, or a container ergonomics interaction. Each cause has a different fix. Long-lived objects require lifecycle analysis. Humongous allocation requires object-size and batching analysis. Explicit GC requires library or script review. Metadata pressure requires class-loading investigation. Treating Full GC as one generic event leads to broad and ineffective tuning.

9.12 Maturity model: from firefighting, to standardization, to prevention

GC governance has at least three maturity levels. The first is firefighting. Teams only discuss GC during incidents, and there is no standard log, no JFR retention, no container memory ledger, no collector review, and no common runbook. This stage does not need sophisticated tuning first; it needs minimum observability and a shared triage order. The second level is standardization. Teams have default GC logs, JFR, dashboards, memory-budget templates, change workflow, and postmortem rules. The main challenge here is avoiding a single template for every service profile. The third level is prevention. The platform can surface GC risk during capacity review, JDK upgrade, service-profile change, dependency adoption, and gray rollout.

The key transition is to move GC from incident response into architecture review. When a service adds local caching, the review should ask about object lifetime and eviction. When an AI SDK is introduced, the review should ask about streaming, buffers, TLS, JSON, and vector-result objects. When a gateway accepts more connections, the review should ask about direct buffers, threads, heap, and native memory together. When the JDK is upgraded, the review should ask whether GC behavior evidence is frozen for that version.

This is why production GC requires technical perspective beyond parameter knowledge. GC is not an isolated JVM feature. It is a system capability built from object design, runtime version, container platform, capacity planning, observability, incident process, and organizational learning. After reading this article, the reader should not only remember collector names. They should be able to classify symptoms, request the right evidence, avoid risky actions, qualify conclusions by workload, and decide which lesson should become a platform asset.

9.13 In multi-tenant services, GC risk is mainly isolation and attribution

GC incidents in multi-tenant systems are harder than in single-tenant services because object lifetime, request size, cache hit behavior, batch work, and downstream waiting may all skew by tenant. One large tenant’s import, report export, or large-object query can change allocation rate and old-generation survival for the entire instance, affecting other tenants on the same process. Instance-level GC metrics will only say “this pod is busy.” They will not say which tenant or action made it busy. Without attribution, tuning becomes global resource increase or global flag change, making every tenant pay for a few abnormal patterns.

The first rule is to drill memory risk down from instance to tenant, action, and data shape. The team needs to know which tenants allocate the most, which endpoints create large result sets, which caches grow by tenant, and which batch paths turn short-lived objects into long-lived objects. The second rule is to place isolation before collector tuning. Rate limits, pagination, batch-size control, tenant cache quotas, async-job quotas, instance sharding, and hot-tenant relocation often matter more than GC flags. The third rule is to make reclamation pressure part of fairness governance. If one tenant’s behavior raises global GC cost, the platform should attribute the cost rather than seeing only JVM noise.

9.14 Large objects, serialization, and AI SDKs move GC risk across traditional service boundaries

Modern Java services increasingly handle large JSON documents, large responses, compressed payloads, vector-search results, model-call context, embedding batches, streaming token buffers, tool-call audit logs, and tracing payloads. These objects may not be normal business entities, but they still enter heap, direct buffers, TLS/native layers, or serialization internals. A team can therefore see a new form of GC pressure: business caches did not grow, database object lifetime looks ordinary, yet after an SDK or large-response path was added, allocation spikes, humongous allocation, direct memory, RSS, and tail latency all worsen.

The right entry is data shape rather than collector choice. How many intermediate objects can one request create? Can the response be streamed? Can parsing be chunked? Can the full body avoid materialization? Can the large object’s lifetime be kept near the stack? Can audit logs move off the synchronous path? Can one tenant or one request have a context-size budget? For AI SDK workloads, token buffers, conversation memory, tool results, vector results, and tracing payloads need budgets. Many GC problems are not cases where Java cannot reclaim memory. They are cases where the system created too many objects that then had to be reclaimed.

9.15 GC SLOs should cover business result, runtime signal, and operability

A common enterprise mistake is to define SLOs for business endpoints but not for runtime capability. A GC SLO should not simply say “pause must stay below this fixed number.” It should be written by service type and business objective. Online transaction systems care whether GC amplifies tail latency and error rate. Batch systems care about throughput and completion time. Aggregation gateways care about queueing, downstream waiting, and object peaks. AI orchestration services care whether context size, streaming buffers, and model-call waiting create memory volatility. Different service classes can have different GC SLOs, but each should combine business outcome, runtime cost, and diagnosis capability.

A stronger GC SLO has three layers. The first is business impact: p99, p999, error rate, task duration, and tenant impact. The second is runtime signal: pause distribution, allocation rate, post-GC occupancy, RSS, Full GC, and collector-specific events. The third is operability: whether the incident window contains GC logs, JFR, container events, and a business timeline; whether rollback criteria are explicit; and whether the conclusion can explain why it is valid. Without the third layer, SLOs become dashboard numbers. With it, they become an executable production contract.

Making GC SLOs executable inside an enterprise process also requires explicit ownership. Application teams own object lifetimes, cache policy, batch size, and request data shape. Platform teams own JDK versions, collector defaults, container memory limits, CPU request and limit policy, diagnostic switches, and image baselines. SRE owns the alignment of business latency, GC logs, JFR, container events, node pressure, and release events inside one incident window. Only when those responsibilities are written into change templates before incidents, invoked during incidents, and reviewed after incidents does a GC SLO become more than a few thresholds on a dashboard. A useful GC SLO should answer who may trigger a collector change, who confirms that business risk actually decreased, who decides whether the new baseline can remain, and who turns one incident into the next capacity-review or code-review checklist. Without that governance layer, tuning remains an expert’s one-off intervention. With it, GC becomes a repeatable production capability.

GC SLOs should also define review triggers, not only post-incident updates. Major JDK upgrades, collector switches, container-size changes, connection-pool or cache-policy changes, adoption of large-object SDKs, significant tenant growth, rebuilt load-test models, and hardware-generation changes should all trigger a lightweight review. The review does not always lead to new flags, but it must confirm that the earlier assumptions still hold: object lifetimes have not shifted, RSS budget remains safe, JFR and GC logs are still available, and gray-rollout metrics still cover the new risk surface. This prevents a once-correct GC strategy from quietly becoming wrong after the environment changes.

10. Conclusion: GC Matters Not Because It Can Be Perfectly Tuned, but Because Its Risk Can Be Observed, Explained, and Rolled Back

The real value of Java GC is not that every service can be driven to some abstract optimum. Its value is that memory-management risk can be continuously observed, correctly explained, changed in small steps, and safely rolled back. As long as teams still discuss incidents using phrases such as “it looks like GC,” “the internet says ZGC is better,” “add more heap,” or “shorter pauses mean success,” GC is still trapped in folklore rather than engineering.

A stronger practice restores GC to its proper role. It is a production contract jointly shaped by object lifetime, service SLOs, container budgets, CPU quotas, collector behavior, and team operability. The health of that contract is not judged by slogans but by evidence. Collector choice is not driven by trend but by objective and workload. Parameters are not copied but tested against hypotheses. Rollouts do not depend on luck but on gray deployment and rollback discipline.

When architects build that path, GC stops being the most mysterious corner of Java performance work and becomes a system the team can reason about methodically. The goal worth pursuing is not the most flattering pause number on one benchmark chart. The goal is a system that remains observable, explainable, and controllable as traffic, versions, containers, and business behavior evolve.

References

- OpenJDK JEP 307: Parallel Full GC for G1.

- OpenJDK JEP 377: ZGC: A Scalable Low-Latency Garbage Collector.

- OpenJDK JEP 439: Generational ZGC.

- OpenJDK JEP 474: ZGC: Generational Mode by Default.

- OpenJDK JEP 490: ZGC: Remove the Non-Generational Mode.

- OpenJDK Shenandoah project pages and relevant JEP or release-note material.

- Oracle or OpenJDK HotSpot GC tuning guides and JDK release notes.

- Official Java Flight Recorder and HotSpot diagnostics documentation.

Series context

You are reading: Java Core Technologies Deep Dive

This is article 2 of 8. Reading progress is stored only in this browser so the full series page can resume from the right entry.

Series Path

Current series chapters

Chapter clicks store reading progress only in this browser so the series page can resume from the right entry.