Article

Concurrency Governance with Virtual Threads in Production Systems

Understand throughput, blocking, resource pools, downstream protection, pinning, structured concurrency, observability, and migration boundaries for Project Loom.

Abstract

Project Loom gives Java a practical way to keep direct, synchronous application code while removing much of the platform-thread cost of blocking waits. What it lowers is the cost of waiting in the JVM. What it does not automatically raise is database capacity, remote API quota, CPU throughput, storage bandwidth, cache headroom, or message-system partitions. That distinction is the first production lesson. Teams that treat virtual threads as unlimited concurrency often rediscover the same resource limits they already had, only deeper in the stack and later in the incident timeline.

In production architecture, Loom is not a slogan about “one million threads.” It is a shift in where concurrency governance must live. In the platform-thread era, expensive threads forced teams to put coarse controls at the thread-pool boundary. Those controls were imperfect, but at least they made scarcity visible. In the Loom era, requests can enter the service more easily, blocking code becomes cheaper to express, and the main governance burden moves toward explicit budgets: connection pools, semaphores, rate limits, bulkheads, deadlines, cancellation, queue discipline, and rollback strategy.

This article therefore treats virtual threads as a production-governance topic rather than only a language/runtime feature. The central question is not “how do I create virtual threads?” but “when waiting becomes cheap, how should an architect redesign concurrency control, downstream protection, observability, and migration strategy without mistaking virtual threads for unlimited throughput?” After reading, the reader should be able to decide where virtual threads fit, where platform threads or reactive pipelines still make more sense, how to protect downstream resources, how to diagnose pinning and starvation, and how to migrate service code without creating new overload paths.

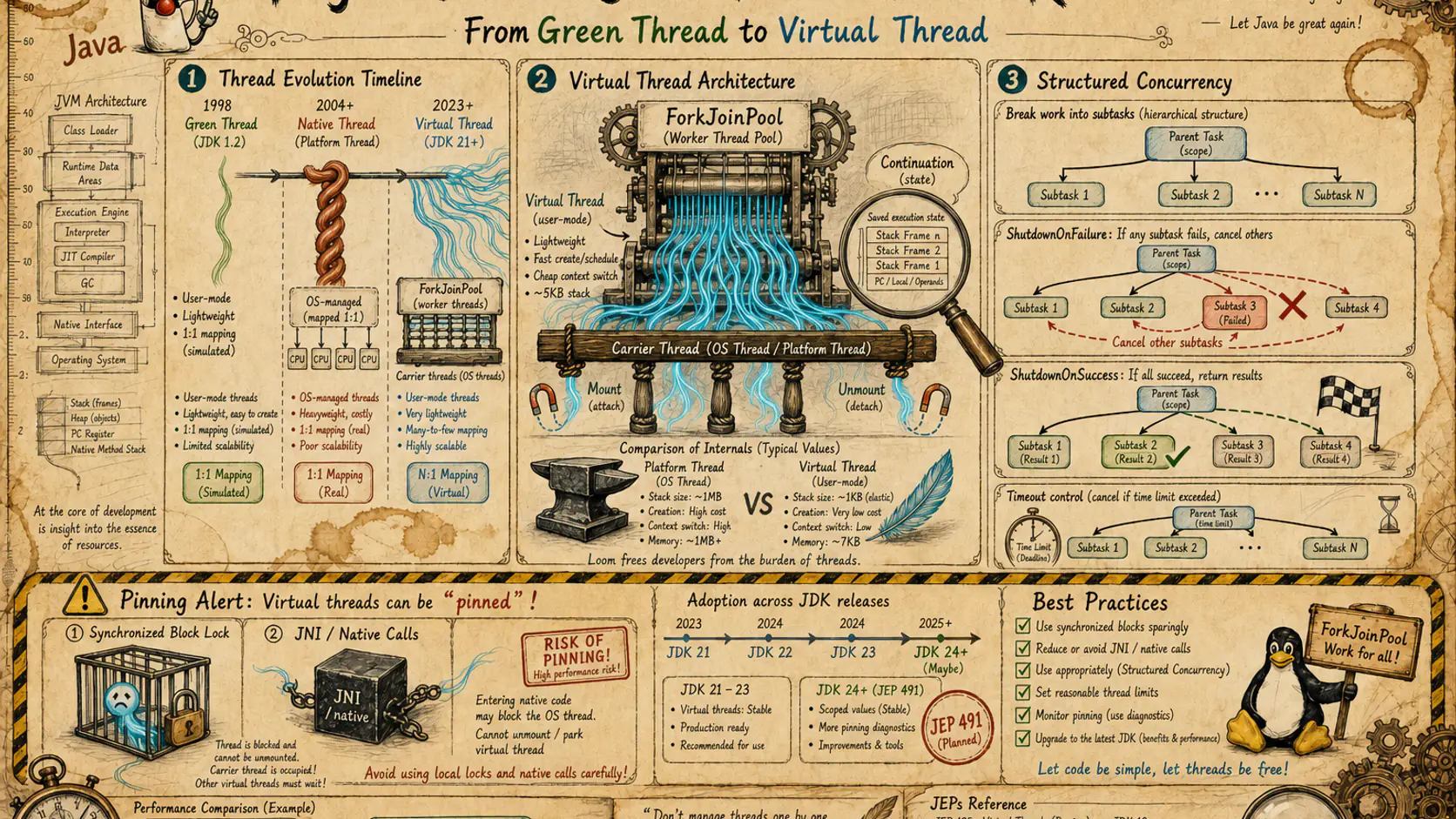

Version language matters. Virtual threads are final from JDK 21. Structured concurrency is still on the preview line at the time of writing and must be described with explicit version and preview-flag context. The relationship between synchronized and pinning is materially different in newer JDK lines than in JDK 21 to 23. For that reason, this article uses conservative wording for any fast-moving claim: every statement about status, behavior, and scope should be read as version-bounded, workload-bounded, and evidence-dependent.

The reading path of this article is also intentionally different from many Loom introductions. Introductory material often starts with a demo that creates a very large number of waiting virtual threads. That is useful as proof that waiting representation is cheap. It is not sufficient as production judgment. Real systems need the second-order discussion: what becomes the real bottleneck when the JVM stops paying a high thread cost, which metrics lose explanatory power, how deep queues move, how fan-out gets amplified, what structured concurrency adds, and what a safe migration sequence actually looks like.

1. The Production Problem with Virtual Threads: Why Cheaper Threads Demand Stronger Governance

1.1 Lower thread cost does not mean system capacity scales with it

The most common production misunderstanding is to equate virtual threads with “infinite concurrency.” That usually comes from two shortcuts. The first is treating thread count as if it were the service’s true capacity. Engineers who spent years in fixed-size thread-pool systems often internalized the idea that “number of threads” roughly equals “number of requests the system can safely hold.” Once thread cost stops being the first bottleneck, they assume capacity also expands. The second shortcut is confusing a demo workload with a production workload. A virtual-thread demo that mostly sleeps can scale very far because it consumes little real downstream capacity. Real requests consume connections, sockets, TLS sessions, buffers, quota, transactions, and application memory.

So the first production distinction must be explicit: thread cost and system capacity are different variables. Thread cost asks how expensive it is for the JVM and operating system to represent a waiting task. System capacity asks how much end-to-end work the service and its dependencies can sustain without unacceptable tail latency, timeout growth, or downstream instability. Loom improves the former. It does not automatically improve the latter.

1.2 Thread pools used to play a hidden admission-control role

That distinction creates a new operational burden. In the platform-thread era, a thread pool often acted as a crude but visible admission gate. When the pool filled up, the system queued or rejected. With virtual threads, requests can enter more easily. If there is no additional limit at the API gateway, queue, semaphore, or downstream budget layer, the queue simply moves deeper: into the database pool, the RPC client, the cache client, or the message pipeline. The concurrency problem did not disappear; the surface where it becomes visible changed.

This also means that teams can no longer rely on thread saturation as an early warning signal. Under Loom, connection-borrow time, downstream timeout ratio, request fan-out depth, cancellation effectiveness, and carrier utilization become more meaningful than raw “active worker thread” counts in many services.

1.3 Organizational responsibility changes as well

Virtual threads also shift responsibility boundaries across teams. In a heavily asynchronous codebase, everyone can see that the service uses a special concurrency model. In a Loom-based service, code often looks like ordinary synchronous business logic again. That is good for readability, but dangerous if the organization mistakes “looks simple” for “is self-governing.” Timeout policy, cancellation, downstream budgeting, idempotency, and fallback logic do not disappear just because the source code becomes direct-style.

The result is a new organizational risk: teams may lower their guard precisely because the code is easier to read. If the platform team does not provide budget primitives, deadline propagation, common diagnostics, and rollback switches, every service team will reinvent a different partial governance model. That is how a runtime feature becomes an operational inconsistency problem.

1.4 The real skill is budget governance, not API familiarity

For that reason, the starting point of Part 3 is not the Loom API surface. It is a governance question. Once blocking becomes cheaper, the main managed object shifts from “threads” to “budgets”: CPU budgets, connection budgets, call budgets, retry budgets, queue budgets, timeout budgets, error budgets, memory budgets, and operational budgets. Virtual-thread adoption is therefore less a story of “new concurrency syntax” and more a story of “explicit capacity governance.” That is the lens through which the rest of the article should be read.

1.5 Why the entry point looks calmer while downstream risk grows

One of the first visible effects of Loom is that the entry point often looks calmer than before. The service can accept more requests before a thread pool becomes visibly constrained. Teams sometimes read that as capacity growth itself. In reality, it often means that pressure moved deeper into the system. The application can still accept work, but the database pool may already be queueing, the HTTP client may already be fighting for connections, and a third-party API budget may already be nearing its limit. A platform-thread pool used to expose overload early and crudely. Loom can make overload later, deeper, and therefore easier to miss.

That matters in incident response. A service can keep returning some successful responses while its deep dependency budgets are already deteriorating. Tail latency grows, retries increase, transaction hold time expands, and low-value work continues in the background. If the organization still uses entry-level smoothness as the main success signal, it will discover Loom-era overload too late.

1.6 Team roles must be rewritten around the new bottleneck surface

The long-term organizational response is not to ban Loom, but to realign responsibility. Architects need to describe where Loom fits and where it does not, platform teams need to provide common budget and observability primitives, service teams need to encode request fan-out and value-aware failure semantics explicitly, and operations teams need to alert on resource waits rather than legacy thread-pool saturation alone. If those role updates do not happen, Loom lowers coding friction while raising systemic risk.

2. Concurrency Model Reorientation: Recutting the Boundary Between Platform Threads, Reactive Pipelines, and Virtual Threads

2.1 Platform threads remain semantically simple, but expensive for long waits

Java already had multiple concurrency answers before Loom. Platform threads, fixed thread pools, CompletableFuture, Netty event loops, reactive streams, and actor-style designs all tried to solve the same broad problem: how to sustain concurrency under finite cores, blocking I/O, and business workflows that still need to progress in parallel. Loom does not invalidate every earlier answer. It redraws their boundary of best use.

Platform threads remain attractive because they match developer intuition. One request, one thread, readable call stacks, direct error propagation, and debuggers that match the source code. Their production weakness is that waiting and thread occupancy are coupled. If the request waits on a remote call for 100 milliseconds, the platform thread remains allocated through that wait. In I/O-heavy services, that becomes an expensive way to represent inactivity.

Thread pools mitigate creation cost and create a capacity gate, but they also conflate multiple concerns: execution reuse, backpressure, isolation, and queueing. A thread pool can prevent one kind of overload while simultaneously hiding another. Loom changes that tradeoff because execution reuse matters less once waiting tasks become cheap to represent.

2.2 CompletableFuture reduced wait cost but increased control-flow cost

CompletableFuture and other asynchronous patterns improved I/O concurrency by removing waits from expensive platform threads. That solved a real capacity problem. But it also fragmented business control flow. Straight-line failure paths became callback composition, context propagation became a design task, cancellation became optional rather than obvious, and debugging often moved away from business frames toward framework frames.

This is not simply a matter of teams “learning async better.” In long-lived enterprise systems, the real difficulty is often not starting concurrency, but understanding the failure and recovery path months later. Direct-style code therefore matters operationally, not only aesthetically.

2.3 Reactive is still the right answer when stream semantics and backpressure are first-class

Reactive design remains powerful when the problem itself is a stream. Long-lived pipelines, event-driven ingestion, infinite data flows, WebSocket broadcasting, and systems that need explicit cross-boundary backpressure still benefit from reactive semantics. In those cases, the key value is not “few threads,” but the ability to model producer/consumer rates, streaming transformations, and scheduler boundaries directly.

That distinction is crucial. Loom reduces the number of request/response services that need reactive code only because platform threads were too expensive. It does not make stream semantics disappear, and it does not replace reactive backpressure with magic.

2.4 Virtual threads are strongest as a direct-style I/O orchestration layer

Virtual threads are strongest in blocking request/response services where waits dominate, CPU work is modest, and readability matters. Database access, cache calls, HTTP client calls, file I/O, and ordinary service aggregation fit this model well when downstream budgets are explicit. CPU-bound work remains better served by bounded platform-thread execution. Long-lived streams often remain better in reactive/event-driven form. Mixed architectures are normal and healthy.

This means concurrency is no longer a monolithic all-or-nothing stack decision. A service can use virtual threads at its HTTP edge, structured concurrency for request fan-out, bounded platform pools for CPU-heavy report generation, and an event-driven model for background stream processing. The real question is no longer “Which model wins?” but “Which model best expresses the governing resource boundary in this part of the system?”

2.5 Architecture review questions must change

Before Loom, teams often asked whether they needed reactive infrastructure to scale Java to high concurrency. After Loom, the better question is narrower and more useful: is the dominant cost in this path waiting, stream semantics, or CPU parallelism? If it is waiting, direct-style code under explicit budgets may now be the simplest correct answer. If it is stream semantics, keep the reactive/event-driven model. If it is CPU, Loom is rarely the first optimization lever.

The main architectural gain here is not uniformity. It is correctness of abstraction. A team that forces a single concurrency ideology onto every service tends to pay either unnecessary cognitive cost or hidden resource cost.

2.6 Mixed-model architecture is usually more realistic than full replacement

Many enterprise systems include APIs, scheduled jobs, stream processors, message consumers, and batch workloads in the same platform. Their bottlenecks and failure semantics differ. Loom is valuable because it lets some of those boundaries return to direct-style code without forcing every boundary to adopt the same model. The goal is not stack purity. The goal is consistent resource governance across different concurrency forms.

2.7 Model selection should be driven by bottleneck shape, semantics, and team capability

A useful selection conversation therefore starts with a small set of questions. Is the path dominated by waiting or CPU? Does it need long-lived streaming semantics? Must backpressure cross component boundaries? Does the team have strong observability and debugging maturity for the current model? Would direct-style code materially reduce control-flow complexity without hiding budget risk? Loom is usually a strong answer only when those questions point toward I/O-heavy, business-logic-heavy, direct-style orchestration.

3. Throughput and Capacity Modeling: Virtual Threads Do Not Replace Capacity Planning, They Move the First Bottleneck

3.1 The true bottleneck has never been “thread count” alone

The most dangerous extrapolation is to treat “many parked virtual threads” as if it implied proportional throughput growth. Real production throughput is the minimum of multiple resource gates: database connections, remote-service quota, HTTP connections, disk throughput, CPU, memory pressure, cache behavior, and sometimes regulatory or tenant-level limits. Loom removes one major cost from that chain, but it does not erase the others.

So the correct first question is not “How many virtual threads can I create?” It is “What resources does one request consume end to end, and which of those have the lowest safe ceiling?” In many business services, the answer is not the JVM thread representation. It is the connection pool, the downstream concurrency allowance, the retry budget, or the CPU of a specific shared dependency.

3.2 Waiting time and execution time should be modeled separately

Virtual threads shine when waiting dominates and CPU work is comparatively small. If the request spends 5 milliseconds computing and 120 milliseconds waiting on databases and remote services, the value proposition is strong. If the request spends 200 milliseconds in CPU-heavy encryption, compression, reporting, or local computation, virtual threads do not create extra cores. In that case they may improve code uniformity, but not actual compute throughput.

That separation also clarifies what needs to be optimized. If waiting dominates, budget control, deadlines, cancellation, and dependency protection matter most. If execution dominates, work partitioning, platform-thread limits, locality, allocation, and JIT/runtime profiling matter more.

3.3 Entry concurrency and downstream concurrency are different numbers

Another essential distinction is between entry concurrency and downstream concurrency. Entry concurrency counts requests accepted into the service. Downstream concurrency counts active demand placed on databases, caches, RPC clients, queues, or APIs. Loom makes entry concurrency easier to increase because accepting a request no longer reserves an expensive platform thread. But if each request now fans out into more downstream calls, downstream concurrency may grow far faster than entry concurrency itself.

That amplification already existed before Loom, but thread pools often constrained it earlier and more crudely. Once that coarse limit disappears, fan-out budgets must become explicit or they will show up as deep overload.

3.4 Fan-out ceilings must become rules, not assumptions

A serious Loom capacity model should define at least these elements: maximum request-level fan-out, dependency-level concurrency budgets, queue behavior when budgets are exhausted, timeout composition, cancellation semantics for no-longer-valuable work, and error/retry budgets. None of those are new concepts. What is new is that Loom removes one accidental guardrail and therefore makes all of them more visible and more necessary.

3.5 Little’s Law still applies, but its operational interpretation changes

Concurrency equals throughput multiplied by time in system still holds. What changes is what engineers should observe. Concurrency is no longer approximated by thread count. It is the number of active work units consuming useful or waiting resources. And the “time” that matters most is rarely the average; it is the tail, where queueing and dependency contention appear first.

3.6 Metrics must shift from thread-centric to wait-centric

Under Loom, teams should watch connection-borrow latency, HTTP connection acquisition time, downstream RTT distribution, request fan-out depth, virtual-thread lifetime distribution, cancellation effectiveness, and carrier CPU behavior at least as closely as legacy thread metrics. Raw thread count alone explains less than it used to.

3.7 Write the capacity equation as a three-layer budget

In practice, capacity planning becomes clearer when written as a three-layer budget. Layer one is entry admission: what volume or tenant mix is the service willing to accept? Layer two is request-internal concurrency: how many child operations may a request spawn, and which of them are mandatory versus optional? Layer three is dependency concurrency: how many active database, cache, HTTP, and message operations are allowed, and what is the timeout and queueing behavior when those budgets are exhausted? Loom does not remove the need for this equation. It makes the absence of this equation more dangerous.

3.8 Load tests, canaries, and production observation must validate budget migration

A Loom validation plan should therefore ask more than “Does the service still pass at N QPS?” It should verify where queueing appears first, how cancellation behaves, whether retries amplify pressure under stress, whether carrier threads are unexpectedly held, and whether downstream saturation now occurs earlier because the entry point admits work more smoothly. Canaries must look not only at user-visible success, but also at deep metrics that may reveal budget migration before full incidents appear.

3.9 Capacity assumptions should live in ADRs and runbooks, not only in old test reports

If Loom changes the effective bottleneck position, then the assumptions behind that change should become long-lived operational assets. Test results that say “the service was stable at 3,000 QPS” are weak if they do not also preserve the dependency ceilings, retry posture, timeout policy, and batch interference assumptions that made the result true. Moving those assumptions into architecture decisions and operational runbooks helps prevent later teams from drifting out of the tested safety envelope without noticing.

4. Resource Pools and Backpressure: Why Virtual Threads Cannot Bypass Connection Pools, Rate Limits, or Timeouts

4.1 Connection pools are not legacy leftovers, they are real capacity gates

In the Loom era, resource pools matter more, not less. A thread pool used to mix execution reuse and rough concurrency control. Virtual threads reduce the importance of execution reuse for waiting tasks, so concurrency control should move closer to the actual scarce resource: database pools for databases, HTTP connection pools for remote services, cache-client budgets for cache pressure, consumer concurrency for message systems, bulkheads for blast-radius containment, queues for burst absorption, and timeouts for bounded waiting.

That is why removing old thread-pool constraints without adding explicit resource budgets is so dangerous. You are not making the system freer. You are making the real bottlenecks easier to hit.

4.2 Backpressure is still necessary, it just moves form

Some engineers assume that because the code is synchronous again, backpressure becomes a “reactive-only” concern. That is false. Backpressure is simply the system’s ability to signal that demand exceeds safe capacity. In a reactive graph it may be encoded as a protocol. In a Loom-based service it is often expressed through semaphores, bulkheads, finite queues, connection-borrow failure, deadline expiry, rate limiting, fast rejection, or explicit degradation. The form changes. The need does not.

4.3 Budgeting should be layered: entry, service-internal, and dependency-side

A resilient Loom service usually budgets at three levels: entry-level acceptance, service-internal fan-out, and dependency-side concurrency. Entry-level budgets decide what work enters the system. Service-internal budgets decide how much one request can amplify itself. Dependency budgets decide what downstream load is safe. Skipping any of those layers tends to produce incidents that are hard to explain because the pressure is transferred rather than prevented.

4.4 Exhausted budgets need explicit failure semantics

When a pool or budget is exhausted, the system needs a rule: fail fast, wait briefly, return a degraded result, or defer the work. The correct answer depends on business value and request type. If the parent deadline is already nearly consumed, even a technically available downstream budget may no longer be worth spending. Loom makes it easier to write direct-style branching logic for these cases, but it does not invent the business rule for you.

4.5 The most dangerous pattern is unlimited acceptance plus deep waiting

One of the worst production designs is to accept almost everything at the edge, create virtual-thread work freely, and let each task queue deeply inside pools and client waits until timeouts cascade. That design often looks deceptively healthy at the entry layer while it is already burning deep dependency budgets. Under Loom, this anti-pattern is easier to write because blocking code feels harmless again. It is not harmless unless bounded by explicit policy.

4.6 Different dependencies need different budget styles

Not every dependency budget should look the same. Databases care about connections, transactions, lock duration, and slow queries. Cache layers care about hot keys, command timeout, and stampede behavior. HTTP/RPC systems care about connections, handshake cost, downstream quota, and retry coupling. Message systems care about consumer concurrency, acknowledgment semantics, and retry/dead-letter policy. A production Loom design should preserve those differences instead of hiding them behind one generic “concurrency limit” concept.

4.7 Timeouts, retries, and queues form one system

Timeout, retry, and queue rules are also coupled. Long borrow waits plus generous deadlines can let deep queues grow invisibly. Retries that ignore the total request budget can amplify a minor dependency wobble into a self-induced storm. Queue rules that do not distinguish high-value from low-value work can cause critical requests to wait behind batch or low-priority traffic. These combinations existed before Loom, but Loom makes them easier to ignore because entry acceptance becomes smoother. The right response is to design them together, not separately.

4.8 Budget rejection should be value-aware

A high-value interactive request may be better served by fast rejection than by long hidden waiting. A non-critical page fragment may be better served by partial success. A night batch may be better served by intentional slowdown. A low-value internal report may deserve a lower-priority queue. Under Loom, value-aware rejection becomes more important because direct-style code makes it easy to over-admit work unless the business rules are encoded explicitly.

5. Pinning and Version Boundaries: Why Carrier Threads Get Held and Why Some Old Advice Is Now Incomplete

5.1 Pinning means waiting without releasing the carrier

Pinning is often oversimplified into a one-line warning. The more precise description is that a virtual thread cannot safely unmount at an expected blocking point, so the carrier platform thread remains occupied longer than the scheduler would ideally allow. The cost is not necessarily immediate failure. It is degraded scheduling efficiency and lower effective carrier reuse.

5.2 synchronized advice must be version-aware, not eternal

In JDK 21 to 23, blocking under certain monitor scenarios could be a major pinning concern. Newer JDK lines improved that story, especially around releasing carriers in monitor-related waits. That means blanket advice such as “replace every synchronized block before using Loom” is now too coarse. The correct production rule is version-aware: inspect long waits under synchronization, distinguish old from newer JDK behavior, and prioritize evidence over slogans.

That said, even if monitor-related pinning improves, long critical sections remain an architectural problem. A lock that encloses remote I/O, disk access, heavy serialization, or expensive business waits is still likely to reduce useful concurrency, even if the exact carrier behavior has changed.

5.3 Native calls, foreign calls, and black-box drivers still deserve extra suspicion

The JDK understands more and more blocking points, but JNI, some native libraries, some foreign-call paths, and some old drivers may still behave like opaque waiting regions from the scheduler’s perspective. If your production path depends on compression libraries, image processing, database drivers with legacy behavior, encryption modules, or foreign/native integration, Loom readiness must include targeted tests and JFR evidence rather than assumption.

5.4 Fixing pinning is often about boundary design, not only lock replacement

Pinning is frequently a symptom of boundary design. If a long remote wait occurs while shared state is held, the deeper problem may be that external waiting and state protection are in the same execution region. If one request has to hold a lock across multiple dependency calls, the problem may be the state machine shape rather than the lock primitive. Treating every pinning issue as “replace synchronized with another lock” often misses the larger architectural cause.

5.5 Good pinning language always names version, scenario, and conclusion

Any serious production writing on Loom should label pinning claims with three qualifiers: which JDK line they apply to, what kind of blocking scenario they refer to, and what conclusion the reader should draw. For example: “In JDK 21 to 23, blocking under some monitor-protected waits can materially reduce carrier release, so long remote waits inside synchronized regions deserve targeted diagnosis.” That is a better statement than “synchronized always pins.”

5.6 Minimal virtual-thread creation example

The following snippet has one narrow scenario: a request handler wants familiar ExecutorService usage while moving task execution onto virtual threads. The reason is that many teams still assume Loom requires a completely alien programming surface. The observation point is API compatibility, not production scalability. The production boundary is strict: this proves nothing about downstream budgets or safe admission.

try (var executor = Executors.newVirtualThreadPerTaskExecutor()) {

Future<String> result = executor.submit(() -> {

if (!Thread.currentThread().isVirtual()) {

throw new IllegalStateException("expected a virtual thread");

}

return "handled by " + Thread.currentThread();

});

System.out.println(result.get());

}5.7 Start the runbook from symptoms, not from theory

When diagnosing potential pinning, do not assume every Loom slowdown is a pinning issue. Start from the symptom surface: is throughput too low relative to in-flight task count, are carriers unusually occupied, are waits clustered around locks or native boundaries, is queueing appearing where it should not? Only after those questions should you decide whether monitor behavior, JNI, foreign calls, or third-party driver paths deserve deeper scrutiny.

5.8 The usual repair order is upgrade, shorten critical sections, then redesign boundaries

Once pinning or carrier hold problems are real, the repair priority should usually be: verify whether a newer supported JDK line changes the behavior, shorten or relocate long waits out of shared-state regions, and only then consider broader boundary redesign or synchronization replacement. That order keeps remediation risk lower while still respecting production reality.

6. Structured Concurrency: Turning Request Fan-Out into an Owned, Cancellable, Aggregatable Task Tree

6.1 Without structure, cheap tasks become cheap chaos

Virtual threads make it natural to run multiple child operations in one request. That is exactly why structured concurrency matters. If child tasks are cheap to create but have no clear owner, cancellation rule, timeout inheritance, or failure policy, direct-style concurrency can become easier to write and harder to govern.

6.2 Scope adds ownership, deadline, and failure policy

The real value of structured concurrency is that it turns request-internal fan-out into a task tree with ownership. A product-page request that fetches user data, orders, recommendations, and risk flags needs rules: what if one child fails, what if the parent deadline is consumed, what if a partial result is acceptable, what if a slow child is no longer valuable? A scope is not just API convenience. It is a place to encode those rules coherently.

6.3 Structured does not mean every child must succeed

Not every request fan-out should be “all or nothing.” Some flows are fail-fast, some are first-success-wins, some allow partial results, and some are bounded by a shared deadline. Structured concurrency is valuable because it makes those different policies visible and testable instead of scattering them across ad hoc catches and retries.

6.4 It improves both explainability and cleanup

Operationally, structured concurrency improves two things at once. First, it makes task relationships easier to explain in logs and traces. Second, it makes no-longer-valuable work easier to cancel. In a Loom service, that second gain matters a great deal because cheap waiting can otherwise permit a large amount of useless background work to accumulate after the user-visible request has already failed or timed out.

6.5 Conceptual structured-concurrency example

The following example represents a simple request-level fan-out to two dependencies where failure in either child should fail the whole parent. The reason this example matters is not the preview API syntax itself, but the governance shape: one parent scope, a single join point, shared failure semantics, and owned child lifetimes. The observation point is whether ownership, join, and failure propagation are visible in one place. The production boundary is that the exact API remains preview-dependent and must be validated for the target JDK and flags.

try (var scope = new StructuredTaskScope.ShutdownOnFailure()) {

var userTask = scope.fork(() -> userClient.fetch(userId));

var orderTask = scope.fork(() -> orderClient.fetchRecent(userId));

scope.join();

scope.throwIfFailed();

return new AccountView(userTask.get(), orderTask.get());

}6.6 Deadlines and cancellation determine whether Loom actually saves resources

Structured concurrency is most valuable when paired with deadline propagation and cancellation. Otherwise, a parent request may fail while child tasks keep consuming database connections, remote quota, or cache capacity. Under Loom, that waste is especially tempting because the code still looks clean and the waiting task itself is cheap. What matters is not cheap task representation but value-aware task lifetime.

6.7 Partial failure rules should be designed, not patched in afterward

Enterprise systems often have mixed result requirements. A recommendation panel may be optional while account data is mandatory. A dashboard may tolerate a missing analytics widget but not a missing permissions check. If those rules are not designed at the task-tree level, they usually reappear later as local exception patches that are difficult to monitor and reason about. Loom makes local fixes easier to write, which is why design discipline has to become stricter, not looser.

7. Observability and Diagnosis: What Thread Dumps, JFR, Metrics, and Traces Mean in the Loom Era

7.1 Thread dumps still matter, but thread count explains less

Loom does not eliminate existing tools. It changes what questions they answer best. Thread dumps still reveal concentration points: many virtual threads waiting on the same pool, the same dependency, the same lock region, or the same request shape. But a large virtual-thread population is not itself a problem. The key question is what those tasks are waiting on and whether that waiting is valuable.

7.2 JFR is more valuable because it turns “ordinary blocking” into inspectable behavior

JFR becomes even more useful under Loom because it helps separate kinds of waiting: lock contention, socket activity, carrier utilization, CPU samples, and lifecycle behavior. The most useful JFR mindset is not to chase one magical Loom event, but to ask operational questions: are carriers actually being reused well, is deep waiting growing under a specific dependency, is a timeout policy creating useless accumulation, is the system CPU-bound rather than wait-bound, and is the suspected pinning path visible in real execution traces?

7.3 Metrics must be organized around budgets rather than thread pools

The old trio of active threads, queue depth, and rejections is no longer enough. Loom-era metrics should include connection-borrow distributions, downstream in-flight counts, cancellation counts and effectiveness, timeout ratios by dependency, fan-out depth distributions, budget rejection counts, virtual-thread lifetime distributions, and carrier CPU behavior. These are closer to the actual bottleneck surface than raw thread count.

7.4 Tracing must answer which work still has user value

Tracing becomes especially important in fan-out heavy services. It should show not only where time is spent, but also which child work continues after the parent has lost value, which retries are rescuing real success versus amplifying waste, and which fallback paths are being activated under budget pressure. That is what makes tracing a governance tool rather than just a latency tool.

7.5 Resource waiting should be your first diagnostic lens

When latency grows under Loom, resource waiting is usually the best first lens: pool waits, connection acquisition, downstream RTT, retry multiplication, or ineffective bulkheads. Threads are the container of waiting, not always the cause. A Loom-aware diagnostic workflow therefore asks “What real budget is currently being consumed badly?” before it asks “How many threads do I have?”

7.6 JFR capture starting point

The following command represents a narrow production-reader job: capture a bounded JFR sample to inspect lock contention, waiting behavior, downstream stalls, or carrier occupancy. The reason it matters is that many Loom issues cannot be validated by static inspection alone. The observation point is the capture boundary and evidence artifact, not some promise that one command explains everything. The production boundary is that JFR complements, but does not replace, load testing, budget analysis, and service-level metrics.

jcmd <pid> JFR.start name=loom-profile settings=profile duration=120s filename=loom-profile.jfr7.7 Diagnosis order should be resource first, thread second, code detail third

A strong Loom diagnosis sequence usually starts with the resource budget that is degrading, then checks whether carriers or task counts are symptom or cause, and only then descends into local code details. This order is practical during incidents because it gets teams closer to a reversible control action: limit, degrade, reduce fan-out, tighten deadlines, or roll back.

7.8 Dashboards should be dependency-budget dashboards

Traditional dashboards often group by JVM resources, GC, CPU, thread pools, and error rate. Loom services still need those views, but they also need dependency-budget views: per-dependency wait time, in-flight count, timeout growth, retry count, fallback activation, cancellation rate, fan-out distribution, and the ratio between entry concurrency and deep demand. Without that, teams keep reading a new bottleneck surface through old instrumentation habits.

7.9 Early warning signals are often waits and failed cancellation, not error rate

Many Loom incidents produce useful warning signs before errors spike. Connection-borrow latency drifts upward, child work survives parent timeout, fallback use grows, retry counts climb, and low-value work occupies deep budgets longer than expected. These are better early alerts than “thread count high” in many Loom services.

7.10 Observability must span pre-release, release, and post-release windows

Loom observability is not only a runtime dashboard. It should begin in pre-release validation, continue through canary release, and remain active during long-window production observation. Some Loom problems only appear after a sustained high-load period or a mixed-workload window where online and batch behavior overlap. That is why observability should track the same budget language across all release phases.

8. Migration Strategy: How Servlet, Spring, JDBC, HTTP Clients, RPC, and Batch Systems Should Change Gradually

8.1 Start with I/O-heavy, bounded, rollback-friendly paths

The riskiest migration pattern is “Loom is easy, so let’s replace everything.” The safest pattern is the opposite: pick paths where waiting dominates, where direct-style code would clearly reduce complexity, where the dependency surface is known, and where rollback is easy. BFFs, aggregation endpoints, and some batch fan-out flows are often good first candidates.

8.2 The first migration task is boundary inventory, not executor replacement

Before changing execution style, teams should inventory existing thread pools, connection pools, retry behavior, timeout policies, fan-out depth, required versus optional downstream calls, and degradation rules. If those constraints stay implicit, Loom can make the code cleaner while making the system less governed.

8.3 Servlet and Spring MVC gain quickly, but can hide the scale of the change

Servlet and Spring MVC paths often benefit strongly because they already express request/response logic in direct style. The operational trap is assuming that a small source-code change implies a small architecture change. If the execution model changes, connection waits, fan-out amplification, and budget rejection behavior all need fresh validation.

8.4 JDBC is powerful under Loom, but easier to misuse

One of Loom’s most advertised wins is pairing virtual threads with blocking JDBC. That win is real only if the database remains explicitly protected. Database limits are about connections, transactions, locking, IOPS, and memory, not about JVM thread cost. If migration removes thread pressure but leaves query budgets implicit, the system merely hits the database ceiling harder and earlier.

8.5 HTTP and RPC clients need request-level budgets

HTTP/RPC integrations benefit from direct-style readability, but they also become easier to overuse. Connections, handshakes, retries, quota, and total request deadlines still have to be explicit. In services that call several downstream systems, request-level budgets often matter more after Loom than before because hidden fan-out becomes easier to write.

8.6 Batch and message-driven systems are vulnerable to task-amplification mistakes

Batch jobs and message consumers can also benefit, but they are especially vulnerable to “one record, one task” over-amplification if downstream budgets are not explicit. A bounded thread pool used to constrain damage accidentally. Loom removes that accidental constraint, so per-resource budgets must replace it deliberately.

8.7 Mixed architectures should coexist rather than convert ideologically

An edge API may use Loom, while stream processing remains event-driven and CPU-heavy report generation stays on bounded platform-thread execution. That is not architectural inconsistency. It is often the correct alignment of model to bottleneck.

8.8 Minimal semaphore example for downstream protection

The following snippet exists to show a narrow but important production point: direct-style virtual-thread code still needs an explicit downstream concurrency budget. The scenario is a service that wants the readability benefits of Loom while protecting a risk service from burst amplification. The reason is that request-level fan-out becomes easier under Loom, and therefore easier to misuse. The observation point is that the budget is explicit in the code, not implicit in a thread pool. The production boundary is that real systems also need deadlines, metrics, rejection policy, and fallback design around this pattern.

public final class RiskGateway {

private final Semaphore budget = new Semaphore(120);

private final RiskClient client;

public RiskResult check(String userId) throws Exception {

if (!budget.tryAcquire(100, TimeUnit.MILLISECONDS)) {

return RiskResult.degraded("risk budget exhausted");

}

try {

return client.fetch(userId);

} finally {

budget.release();

}

}

}8.9 A practical migration sequence template

For most teams, a workable migration sequence looks like this: choose one I/O-heavy path, inventory budgets and failure rules, design direct-style fan-out plus downstream protection together, validate in pre-production with load, release with canary-level deep metrics, and only then expand to more paths. This sequence does not optimize for speed. It optimizes for isolating learning and bounding incident scope.

8.10 Rollback conditions should be defined before the first release

Loom migrations often fail not as immediate functional errors, but as budget-position changes: worse pool waits, deeper queues, more no-value work, more downstream pressure, or degraded p99 without headline failures. That is why rollback triggers should be written before release: rising borrow latency, rising downstream timeout ratio, ineffective cancellation, unexplained carrier occupancy, or significant tail-latency deterioration without business benefit should all qualify as rollback conditions where appropriate.

8.11 Migration decisions should differ by business shape

A BFF, a transactional backend, a batch integration service, and a long-running event processor are not the same problem, and should not receive the same Loom answer. The more a service is dominated by request/response waiting and orchestration complexity, the stronger Loom’s case becomes. The more it is dominated by CPU or stream semantics, the weaker the case often is.

8.12 Platform teams should productize the boring Loom parts

If every team implements its own deadline propagation, budget rejection metrics, JFR guidance, fallback semantics, and rollback toggles, Loom becomes an inconsistency multiplier. Platform support should make these concerns easier, not optional. Standardized context propagation, common budget components, observability templates, and execution-model switches turn Loom from an isolated team trick into a reproducible organizational capability.

9. Benchmark: Which Loom Tests Matter and Which Conclusions Should Not Drive Production Guidance

9.1 Separate representation-cost tests from end-to-end throughput tests

Loom is easy to benchmark badly because its demo wins are visually impressive. Being able to park many waiting tasks proves that thread representation became cheaper. It does not prove that a production service can safely multiply its real end-to-end concurrency. Representation-cost benchmarks and end-to-end service benchmarks answer different questions and should never be conflated.

9.2 Freeze downstream budgets before comparing results

Any production-grade comparison should hold dependency budgets constant: connection-pool sizes, client settings, retries, timeouts, quota assumptions, hardware, and framework/JDK versions. If those move, the result stops being Loom evidence and becomes a mixed-change experiment.

9.3 Tail latency, error rate, and queueing matter more than averages

Because Loom often smooths entry behavior before deep overload appears, average latency can remain attractive while p95/p99 and downstream wait times are already drifting. Any claim about better throughput that ignores error rate, timeout growth, or queueing behavior is incomplete for production use.

9.4 Maintainability and explainability are part of the result

One real Loom gain is not only throughput but operational clarity: direct business call stacks, easier debugging, and lower callback complexity. Benchmarks that ignore these dimensions and report only micro numbers are often poor guides for long-lived system decisions.

9.5 Benchmark conclusions must use boundary language

A responsible conclusion sounds like: “Under this workload, with these dependency ceilings and this JDK/framework combination, the Loom variant reduced platform-thread occupancy and improved p95 in this concurrency band while preserving correctness.” An irresponsible conclusion sounds like: “Virtual threads are X times faster than thread pools.” Production guidance should prefer the former.

9.6 Good proof usually has three stages: lab, canary, long-window observation

The strongest Loom evidence usually comes in three stages: controlled lab validation, real-traffic canary observation, and sustained high-load or long-window production observation. Many Loom migrations look fine in stage one and become interesting only in stages two and three, where budget migration, cancellation effectiveness, and retry amplification become visible.

10. Production Checklist and Anti-Patterns: What Must Be Confirmed Before Release and What Usually Goes Wrong

10.1 Pre-release checks must cover version, budget, failure semantics, and rollback

A serious Loom release should verify supported JDK/framework/library combinations, downstream budgets, timeout and retry semantics, cancellation rules, observability coverage, and rollback procedure. “The code compiles and the endpoint still works” is not enough.

10.2 Four common anti-patterns

The first anti-pattern is treating virtual threads as unlimited threads. The second is using Loom as camouflage for CPU-bound problems. The third is assuming that direct style eliminates the need for backpressure. The fourth is changing execution without changing observability. All four arise from the same core mistake: confusing easier code with self-governing capacity.

10.3 Massive lock rewrites “to avoid pinning” are often the wrong reaction

Once teams learn about pinning, some try to replace every synchronized region in sight. That is often an overreaction. Validate first, localize the hot path, understand the version context, and only then decide whether lock replacement, boundary refactoring, or JDK upgrade is appropriate.

10.4 Loom makes no-value work easier to tolerate unless cancellation is real

Because waiting tasks are cheap to represent, systems may accidentally tolerate large volumes of work that no longer benefit the user: child calls surviving parent timeout, race losers continuing after a winner is known, or batch work consuming online budgets during peak windows. That is why cancellation and value-aware task shutdown deserve explicit treatment in any Loom service.

10.5 The Production Change Should Ship With an Auditable Evidence Packet

A production Loom path should usually ship with a compact evidence packet: target path description, applicable versions, budget map, entry versus downstream concurrency model, timeout and retry rules, cancellation behavior, load-test comparison, key JFR findings, dashboard updates, rollback conditions, and post-release observation scope. Without that, Loom adoption remains a technical preference rather than a governed production decision.

10.6 Incident runbook one: many virtual threads, low CPU, rising latency

This shape usually signals deep waiting rather than raw compute shortage. The first checks should be pool waits, HTTP connection acquisition, dependency RTT, retry inflation, cancellation effectiveness, and abnormal fan-out amplification. Only after resource-side symptoms are exhausted should pinning or scheduler behavior become the primary suspect.

10.7 Incident runbook two: the downstream system is melting, but the entry point still looks calm

This usually means Loom removed an earlier coarse admission barrier and exposed deeper budgets directly. Recovery often starts with tighter entry limits, reduced fan-out, non-critical call disablement, or degraded paths rather than blindly expanding the downstream dependency.

10.8 Incident runbook three: code became simpler, but observability became worse

That paradox is real when teams keep old thread-centric dashboards after a Loom migration. If the system now hides overload deeper in pools and dependencies, but monitoring still watches mainly thread counts, diagnosis quality can regress even while source code gets cleaner. The fix is not to abandon Loom. The fix is to modernize the observability model.

10.9 Final anti-pattern: treating Loom as a performance feature rather than a governance contract

All of the previous anti-patterns compress into one larger mistake: treating Loom as performance theater rather than a governance contract. Synchronous blocking became cheaper, so resource governance must become more explicit. Direct-style code returned, so failure semantics and task lifetime must be designed earlier. Entry behavior became smoother, so deep budgets must become more visible. That is the true production meaning of virtual threads.

10.10 A simplified decision matrix: when Loom is the first candidate and when it should wait

If the path is I/O-heavy, orchestration-heavy, version-verifiable, rollback-friendly, and suffering from asynchronous complexity, Loom is often a strong candidate. If the path is CPU-heavy, stream-native, black-box-native, or observability-poor, Loom may be secondary or premature. The matrix matters because it turns “why are we doing this?” into a traceable engineering answer instead of a trend-driven one.

10.11 Three common production scenarios have very different Loom value

A BFF aggregation layer, a low-latency compute service, and a batch integration workflow are three very different concurrency problems. Loom often fits the first strongly, may contribute little to the second, and can help or harm the third depending on budget discipline. Treating a win in one scenario as universal guidance for the other two is a frequent organizational mistake.

10.12 Seven review questions that matter more than API preference

What dominates the path: waiting or CPU? What downstream budget will be amplified first? How many child tasks may one request create? How does deadline propagation work? Which failures can degrade and which must fail the whole request? How is deep waiting prevented when budgets are exhausted? What is the rollback trigger? If these questions do not have clear answers, the Loom plan is not yet production-ready.

10.13 Incident review should record budget migration, not only the slow point

Good postmortems do more than say “this pool saturated.” They explain which old coarse limit used to stop the traffic earlier, where the demand moved after Loom, which metrics failed to warn in time, how retries or fallback amplified pressure, and whether cancellation or rollback behaved as intended. That is the learning loop that prevents the same class of failure in a different path later.

10.14 The long-term goal is not “Loom everywhere,” but direct style plus explicit budgets

A mature organization should not measure success by counting how many services “use virtual threads.” It should measure success by how many Loom-backed paths are still governed by clear budgets, deadlines, cancellation, observability, and rollback evidence. Some services may never need Loom. Some may use it only at the edge. That is normal. The strategic goal is not ideological uniformity. It is lower accidental complexity without lower governance quality.

10.15 Final judgment: Loom gives Java permission to choose simpler code again, but not permission to skip governance

For years, many Java teams were forced into a tradeoff: keep direct synchronous code and pay high platform-thread waiting cost, or reduce wait cost and accept much more asynchronous control-flow complexity. Loom breaks that tradeoff and gives teams permission to choose simpler business code again. But permission is not safety. The feature creates the opportunity for better systems; it does not create those systems automatically. Budgets, backpressure, structured concurrency, cancellation, diagnostics, observability, and rollback still decide whether the result is production-grade.

10.16 Role-based delivery: architects, platform teams, service teams, and operations each owe a different artifact

To make Loom sustainable, the migration cannot be reduced to “replace the executor.” Architects owe the boundary decision: which paths fit direct-style I/O, which should stay reactive or message-driven, which dependency budgets will be amplified, and which failure modes must be rehearsed before release. Platform teams owe default capabilities: virtual-thread execution templates, request-level budget primitives, deadline propagation rules, JFR collection guidance, thread-dump interpretation notes, dashboard templates, and rollback switches. Service teams owe path-level evidence: fan-out limits, downstream concurrency budgets, failure semantics, cancellation propagation, load-test results, gray-rollout windows, and rollback steps. Operations teams owe runtime evidence: wait location, rejection location, no-value work, downstream budget exhaustion, retry amplification, and cancellation effectiveness.

Without those role-specific artifacts, Loom remains a local experiment. One service may report success, another may report that Loom melted a database, and a third may report that pinning was irrelevant. The difference is often not the runtime feature itself; it is the maturity of budget, observation, and rollback discipline around it. Role-based delivery turns a runtime option into a platform contract.

10.17 Platform templates should package the default safe path, not only the API

Many platforms start new capabilities by publishing a starter or sample project. That is useful, but for Loom it is not enough. A production-grade template should package entry concurrency, child-task concurrency, deadline propagation, cancellation, downstream budgets, exception aggregation, fallback semantics, JFR events, log correlation, and metric names into the default path. Service teams can change those defaults, but the change should be explicit.

The template must also avoid false safety. If it gives every request a virtual thread but does not define request fan-out limits, it only moves risk into business code. If it demonstrates structured concurrency but does not define partial failure, timeout, and cancellation policy, it makes scope syntax look safer than it is. If it says “protect downstreams” only in prose but ships no metric or budget primitive, the protection will likely fail during incidents. The template should package a safe path, not a syntax shortcut.

10.18 Rollout in stages: shadow validation, small production gray, then budget-based expansion

A Loom migration should not cover an entire business chain at once. The first stage is shadow validation. The goal is not to prove performance gain; it is to verify dependency compatibility, context propagation, log correlation, JFR visibility, cancellation semantics, and rollback switches. The second stage is a small production gray rollout. The goal is to see where waiting moves once the old thread-pool bottleneck disappears: which dependency budget tightens first, which timeout composition becomes visible, and which retry path amplifies deeper pressure. The third stage is budget-based expansion, where every increase in coverage is tied to downstream capacity evidence.

This order deliberately proves control before proving benefit. Many failed migrations are not cases where Loom had no value. They are cases where the team rushed to throughput, thread count, and aesthetic code wins before proving context, cancellation, observability, and rollback. The safer production order is: prove the system can be controlled, then prove it is beneficial; prove failure can retreat, then prove success can expand; prove dependency budgets can absorb the change, then allow more entry traffic to flow through it.

10.19 Coexist with reactive, message-driven, and batch models instead of replacing them

Loom does not remove the value of reactive, message-driven, or batch models. Reactive pipelines still fit long-lived streams, explicit backpressure, and event composition. Message-driven systems still fit smoothing, asynchronous decoupling, retry buffering, and eventual consistency. Batch systems still fit data windows, throughput-oriented jobs, and replayable work. Loom’s strength is readable blocking I/O orchestration; it is not a universal concurrency answer.

Most enterprise systems will remain mixed. An aggregation edge may use virtual threads, stream processing may remain reactive or message-based, background processing may remain batch-oriented, and low-latency compute may continue to focus on CPU, layout, and lock behavior. The real design work is boundary translation: when a virtual-thread request enters a queue, how does deadline become message expiration or consumption budget; when a reactive stream calls a synchronous service, how does backpressure become rejection or fallback; when a batch job calls online dependencies, how is batch concurrency constrained by online budgets? Without those translations, coexistence becomes a new complexity source.

10.20 Success metrics: measure governance quality, not adoption count

Counting how many services enabled virtual threads is a weak success metric. It only says the API is present; it does not say the system is more reliable, more maintainable, or cheaper to operate. Stronger metrics include how many paths have request-level budgets, how many downstreams have explicit concurrency limits, how many dashboards expose wait location, how many gray rollouts define rollback signals, how many postmortems record budget migration, how many services can stop no-value work after deadline, and how many templates include JFR and tracing evidence by default.

Those metrics are less flashy than adoption rate, but they match enterprise engineering reality. Loom’s long-term value is not that the organization can claim fast feature adoption. It is that more services can express complex I/O with lower cognitive cost while preserving budget governance, failure governance, and observability quality. The success criterion is not “we used Loom.” It is “simpler code still preserves system boundaries.”

10.21 ADRs should record budget migration, not only Loom adoption

When a team adopts virtual threads on a path, the ADR should not only say “use Loom to improve concurrency.” That statement has little audit value because it does not say what the old bottleneck was, where pressure will move, or how the system knows it did not push risk deeper. A useful ADR should record four things. First, what implicit guardrail existed in the old model: Tomcat threads, business executors, reactive backpressure, message-consumer concurrency, or database pool limits. Second, after migration, which guardrails remain, which are replaced, and which move into request-level budgets. Third, which dependency or resource is expected to become the next first bottleneck. Fourth, which signals trigger rollback.

This kind of ADR also explains why the team did not “use Loom everywhere.” One service may use virtual threads only in the aggregation edge because the message side still needs batch backpressure. Another path may stay unchanged because the main cost is CPU. A third path may migrate and then roll back because a third-party API cannot absorb deeper concurrency. Capturing these decisions creates a reviewable history of concurrency judgment rather than a shallow adoption inventory.

10.22 Platform review should inspect negative cases, not only the happy path

Loom proposals are easy to present through success stories: shorter code, fewer callbacks, better-looking load-test numbers, and thread count no longer being the visible bottleneck. Platform review must require negative cases. What happens if the downstream pool is exhausted? How quickly do child tasks release resources after parent cancellation? If one dependency slows down, does the system create no-value work? If JFR does not record the expected pinning or wait evidence, is there a second diagnostic path? If p99 worsens during gray rollout while average latency improves, how will the team interpret the tradeoff?

Negative-case review exposes paths that are elegant but not governed. Most Loom risks do not appear on the happy path. They appear in failure, cancellation, retry, fallback, and observability gaps. A mature platform should turn those negative cases into review questions instead of hoping every service team remembers them from experience. Only when negative cases are explainable does a Loom proposal become a production change rather than a technical demo.

11. Conclusion: Loom’s Value Is Maintainable Synchronous Code, but Production Safety Comes from Explicit Concurrency Governance

Loom’s most practical value is not the slogan of lightweight threads. It is that many Java services can once again express complex I/O-heavy business flows in direct, readable code without paying the full historical cost of blocking platform threads. That is a major engineering gain for teams that have long carried callback-heavy or operator-heavy orchestration only because waiting used to be expensive.

But any narrative that says “thread cost is solved, so high concurrency is solved” is misleading. Production safety still comes from budgets, resource protection, backpressure, timeouts, cancellation, version-aware diagnostics, observability, and rollback. Loom simply removes one accidental proxy for scarcity and forces architects to govern the real scarce things more honestly.

The safest long-term reading is therefore this: treat virtual threads as a first-class default candidate for direct-style I/O services, not as the only correct model; treat structured concurrency as a task-lifecycle discipline, not just as nicer syntax; treat pinning as a versioned, evidence-driven carrier-utilization topic, not as a one-line taboo; treat benchmarks as bounded claims, not marketing numbers; and treat migration as resource-boundary redesign, not as executor replacement.

When those conditions hold, Loom can materially improve maintainability, diagnosis, and I/O concurrency economics. When they do not, it merely moves old overload problems into deeper parts of the system. Loom is not the end of concurrency governance. It is the point where concurrency governance becomes impossible to postpone.

References

- JEP 444: Virtual Threads, https://openjdk.org/jeps/444

- JEP 491: Synchronize Virtual Threads without Pinning, https://openjdk.org/jeps/491

- OpenJDK Loom Project, https://openjdk.org/projects/loom/

- Structured Concurrency preview JEP line, including JEP 453 / 462 / 480 / 499 / 505 / 525

- Official JDK release notes and versioned documentation for post-JDK-21 Loom behavior

Series context

You are reading: Java Core Technologies Deep Dive

This is article 3 of 8. Reading progress is stored only in this browser so the full series page can resume from the right entry.

Series Path

Current series chapters

Chapter clicks store reading progress only in this browser so the series page can resume from the right entry.

- Java Memory Model Deep Dive: From Happens-Before to Safe Publication A production-grade deep dive into JMM, happens-before, volatile, final fields, optimistic locking, memory barriers, cache coherence, lock semantics, HotSpot implementation, and concurrency diagnostics.

- Modern Java Garbage Collection: Production Judgment, Evidence Collection, and Tuning Paths Use symptoms, GC logs, JFR, container memory, and rollback discipline to choose and tune G1, ZGC, Shenandoah, Parallel GC, and Serial GC without cargo-cult flags.

- Concurrency Governance with Virtual Threads in Production Systems Understand throughput, blocking, resource pools, downstream protection, pinning, structured concurrency, observability, and migration boundaries for Project Loom.

- Valhalla and Panama: Java's Future Memory and Foreign-Interface Model Separate delivered FFM API capabilities from evolving Valhalla value-type work, and reason about object layout, data locality, native interop, safety boundaries, and migration governance.

- Java Cloud-Native Production Guide: Runtime Images, Kubernetes, Native Image, Serverless, Supply Chain, and Rollback A production-oriented Java cloud-native guide covering runtime selection, container resources, Kubernetes contracts, Native Image boundaries, Serverless, supply chain evidence, diagnostics, governance, and rollback.

- Spring AI and LangChain4j: Enterprise Java AI Applications and AI Agent Architecture A production-grade guide to Spring AI, LangChain4j, RAG, tool calling, memory, governance, observability, reliability, security, and enterprise AI operating boundaries.

- JIT and AOT: From Symptoms to Diagnosis to Optimization Decisions A production decision guide for HotSpot, Graal, Native Image, PGO, and JVM diagnostics.

- Java Ecosystem Outlook: JDK 25 LTS, JDK 26 GA, and JDK 27 EA An enterprise architecture view of Java's next decade: version strategy, roadmap status, ecosystem boundaries, cloud-native operations, AI governance, and performance evolution.

Reading path

Continue along this topic path

Follow the recommended order for Java instead of jumping through random articles in the same topic.

Next step

Go deeper into this topic

If this article is useful, continue from the topic page or subscribe to follow later updates.

Loading comments...

Comments and discussion

Sign in with GitHub to join the discussion. Comments are synced to GitHub Discussions