Series · AI programming assessment

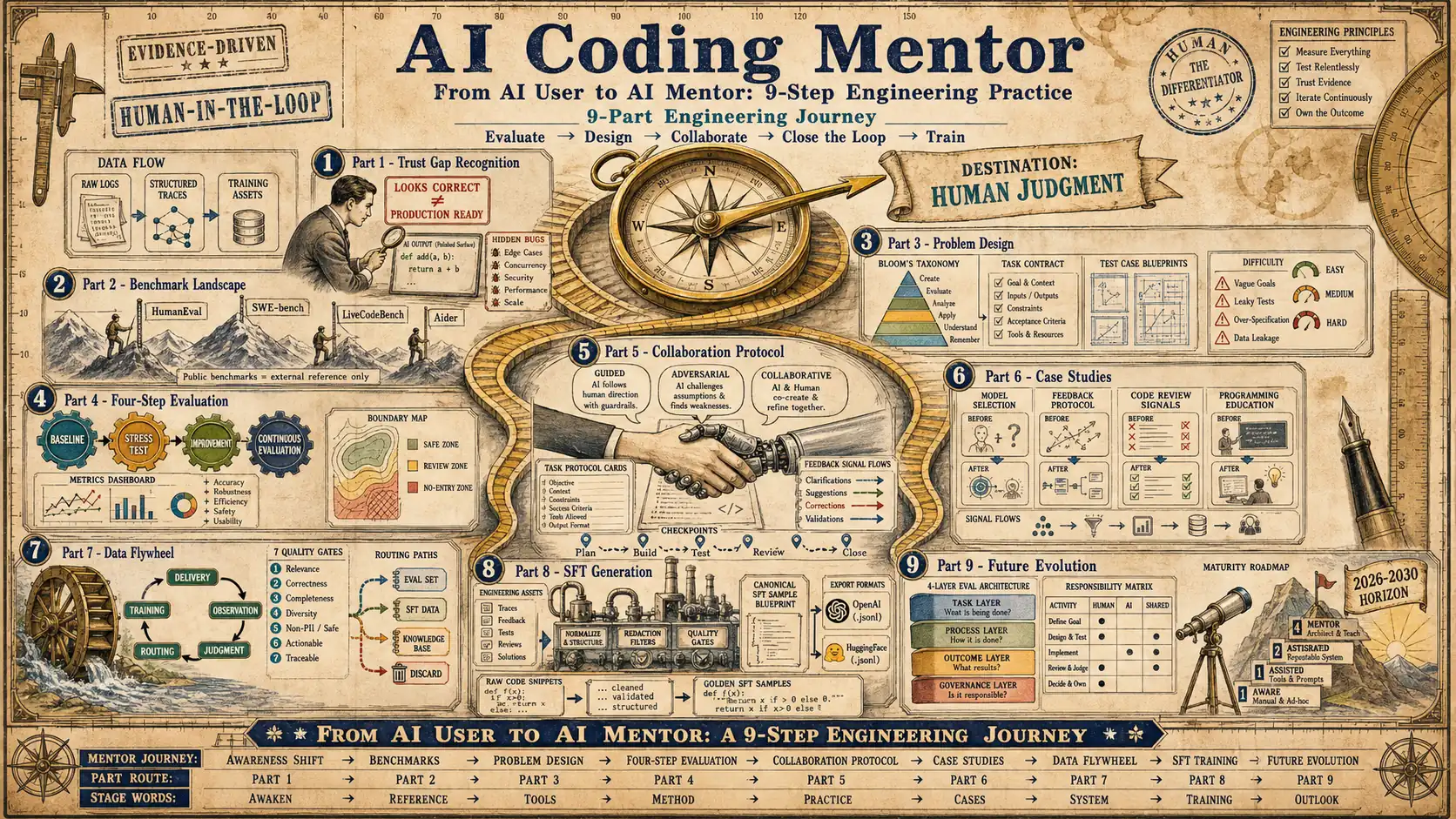

AI Coding Mentor Series

Systematic interpretation around AI programming assessment, problem design, collaboration models, case studies, and SFT data generation.

Progress is stored in this browser only; without saved progress, reading starts from chapter one.

- Status

- Completed

- Difficulty

- Intermediate

- Chapters

- 9/9

- Read time

- 160 min

Guide

Series guide

Understand the reading promise, main path, and reference chapters before entering individual articles.

This series treats AI coding as an engineering education and evaluation problem, not just a model leaderboard problem. The central question is how a team can observe, guide, and improve coding agents with reviewable tasks, mentor feedback, and reusable delivery evidence.

Who should read this series?

- Engineers who use coding agents but want better task design and review discipline.

- Tech leads who need a repeatable model-evaluation process for real code work.

- Teams that want to turn collaboration traces into SFT or feedback data.

What this series covers

The series starts from the mentor role, then moves through benchmark interpretation, problem design, evaluation loops, collaboration patterns, case studies, system building, SFT data generation, and future outlook. Each part adds one layer to the same operating model: make agent capability observable, make feedback specific, and make delivery evidence reusable.

Recommended reading order

Read Part 1 and Part 2 first to understand why public benchmarks are only a starting point. Continue with Part 3 and Part 4 to learn how to design tasks and evaluation loops. Use Part 5 to Part 8 as the practical section for collaboration protocols, case analysis, system architecture, and training-data generation.

Series Path

Read by chapter

Chapter clicks store reading progress only in this browser so the series page can resume from the right entry.

- Why do you need to be a coding mentor for AI? When AI programming assistants become standard equipment, the real competitiveness is no longer whether they can use AI, but whether they can judge, calibrate and constrain the engineering output of AI. This article starts from trust gaps, feedback protocols, evaluation standards and closed-loop capabilities to establish the core framework of "Humans as Coding Mentors".

- Panorama of AI programming ability evaluation: from HumanEval to SWE-bench, the evolution and selection of benchmarks Public benchmarks are not a decoration for model rankings, but a measurement tool for understanding the boundaries of AI programming capabilities. This article starts from benchmarks such as HumanEval, APPS, CodeContests, SWE-bench, LiveCodeBench and Aider, and explains how to read the rankings, how to choose benchmarks, and how to convert public evaluations into the team's own Coding Mentor evaluation system.

- How to design high-quality programming questions: from question surface to evaluation contract High-quality programming questions are not longer prompts, but assessment contracts that can stably expose the boundaries of abilities. This article starts from Bloom level, difficulty calibration, task contract, test design and question bank management to explain how to build a reproducible question system for AI Coding Mentor.

- Four-step approach to AI capability assessment: from one test to continuous system evaluation Serving as a coding mentor for AI is not about doing a model evaluation, but establishing an evaluation operation system that can continuously expose the boundaries of capabilities, record failure evidence, drive special improvements, and support collaborative decision-making.

- Best Practices for Collaborating with AI: Task Agreement, Dialogue Control and Feedback Closed Loop The core skill of being a Coding Mentor for AI is not to write longer prompt words, but to design task protocols, control the rhythm of conversations, identify error patterns, and precipitate the collaboration process into verifiable and reusable feedback signals.

- Practical cases: feedback protocol, evaluation closed loop, code review and programming education data Case studies should not stop at “how to use AI tools better”. This article uses four engineering scenarios: model selection evaluation, feedback protocol design, code review signal precipitation, and programming education data closed loop to explain how humans can transform the AI collaboration process into evaluable, trainable, and reusable mentor signals.

- From delivery to training: How to turn AI programming collaboration into a Coding Mentor data closed loop The real organizational value of AI programming assistants is not just to increase delivery speed, but to precipitate trainable, evaluable, and reusable mentor signals in every requirement disassembly, code generation, review and revision, test verification, and online review. This article reconstructs the closed-loop framework of AI training, AI-assisted product engineering delivery, high-quality SFT data precipitation, and model evaluation.

- From engineering practice to training data: a systematic method for automatically generating SFT data in AI engineering Following the data closed loop in Part 7, this article focuses on how to process the screened engineering assets into high-quality SFT samples and connect them to a manageable, evaluable, and iterable training pipeline.

- Future Outlook: Evolutionary Trends and Long-term Thinking of AI Programming Assessment As the final article in the series, this article reconstructs the future route of AI Coding Mentor from the perspective of engineering decision-making: how evaluation objects evolve, how organizational capabilities are layered, and how governance boundaries are advanced.